Have you ever had the feeling that behind AI there was something magical, almost esoteric, accessible only to a chosen few?

Headlines scream about intelligence that writes like a human, about threats to programmers, about an already decided future.

Inside you, that tension between curiosity and fear grows: what if it were true?

What if the machine is really taking my place?

Stop for a moment.

The truth is that there's no magic, no consciousness, no thought.

There are tools, just like in a toolbox.

And you, as a developer, know well that tools, however sophisticated, only work if someone knows how to use them.

AI isn't a spell, it's a precision laboratory made of pieces that fit together, like gears working silently.

Here's the promise: by the end of this article you'll be able to look at a language model without reverential fear, recognizing every mechanism for what it is.

Not philosophy, but concreteness.

Not smoke, but tools you can learn to handle.

I myself started with doubts similar to yours.

After over 25 years as a software architect, after creating and selling successful companies and training hundreds of developers, I understood one thing: hope is not in vain.

If you learn to know the hidden gears, you'll have a huge advantage over those who merely suffer the revolution.

This is the news I give you: language models don't think, don't dream, don't create.

They calculate.

And they do it through tools that anyone can understand, if shown the right way.

It's like discovering that the magician has no powers, just a set of well-trained tricks.

And that's why today I'll guide you inside the invisible box that makes language models work.

Piece by piece, tool by tool, until you too can see what's really under the hood of artificial intelligence.

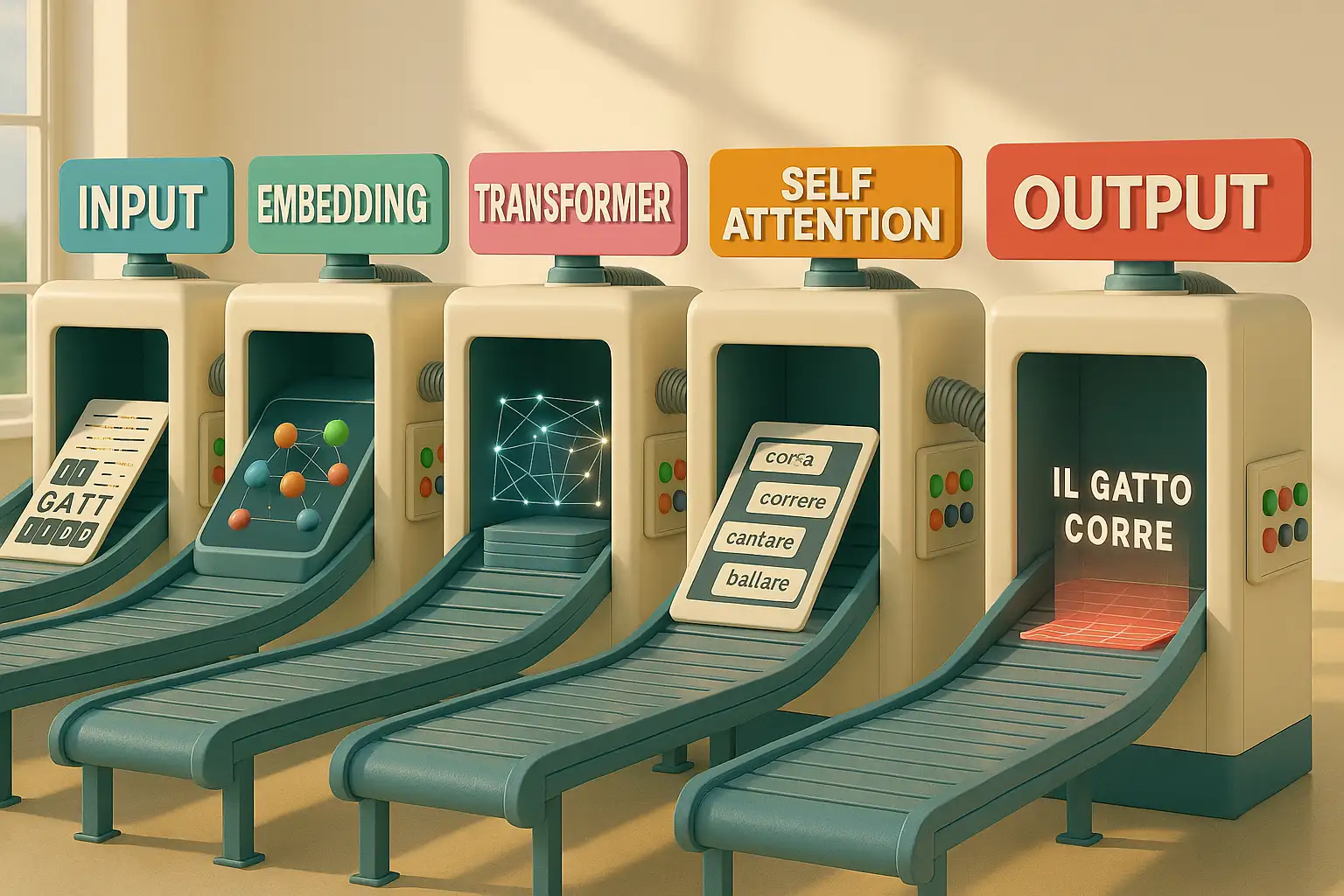

How word embedding really works

Imagine opening the toolbox and finding a tape measure.

It seems trivial, but without it you can't measure anything, you can't build anything.

Embedding is exactly this: measuring the distance between words.

It no longer matters that they're made of letters or carry meanings; what matters is only where they sit in a numerical space.

Each word becomes a point on an invisible map:

- "King" and "queen" end up close because they share many characteristics

- "Dog" and "cat" are found at a short distance

- "Car" and "banana" get lost far away in numerical space

It's not understanding, it's geometry.

It's as if every term were transformed into coordinates that you can plot on a grid with many dimensions.

Why is it needed?

Because models don't read like we do: they compare numbers, calculate relationships.

Without this invisible measure, text would be just chaos.

With embedding, instead, the machine has a criterion to say: "these two words are similar, these two aren't".
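To make the tape measure concrete, here is a minimal sketch of how closeness between word vectors can be computed. The three-dimensional vectors are invented for illustration (real models learn vectors with hundreds or thousands of dimensions), and cosine similarity is only one common way to compare them.

```python
import math

# Hypothetical 3-dimensional embeddings, hand-picked for illustration only.
EMBEDDINGS = {
    "king":   [0.90, 0.80, 0.10],
    "queen":  [0.85, 0.90, 0.15],
    "dog":    [0.10, 0.20, 0.90],
    "cat":    [0.15, 0.25, 0.85],
    "car":    [0.90, -0.30, 0.10],
    "banana": [-0.50, 0.80, -0.20],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def similarity(word_a, word_b):
    """Look up two words in the toy table and compare their vectors."""
    return cosine_similarity(EMBEDDINGS[word_a], EMBEDDINGS[word_b])


for pair in [("king", "queen"), ("dog", "cat"), ("car", "banana")]:
    print(pair, round(similarity(*pair), 3))
```

With these made-up numbers the first two pairs score close to 1, while "car" and "banana" score below zero: the machine's criterion for "similar" is nothing more than this arithmetic.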

And that's where it starts to build.

You don't need to believe in magic: just understand that everything starts from a simple tool.

A tape measure that doesn't measure centimeters, but numerical meanings.

Without it, the toolbox would be empty.

With it, you have the first step to dismantle the myth of artificial intelligence.

Why tokenization breaks text in strange ways

Suppose, now, you pick up a cutter.

It's not for measuring, but for dividing.

Tokenization is this: a clean cut that divides text into smaller pieces.

But don't expect the cuts to follow the rules you're used to.

It's not certain the blade will stop at the end of a whole word: sometimes it divides it in half, other times it breaks it into roots and suffixes.

The reason is simple: the model doesn't reason with the same units we use.

It needs more manageable segments, recurring and easy to combine.

A bit like when you disassemble furniture: you don't divide it into huge boards, you separate it into screws, hinges, and panels.

Thus, tokens become standard pieces to reassemble in a thousand different ways.

For you, reading, "developer" is a whole word, with a compact meaning.

For the machine, instead, it might become "develop" and "er".

It's not an error, it's a functional cut: this way the model can handle huge texts with a reduced vocabulary.
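The cut can be sketched in a few lines. This is a simplified greedy longest-match splitter over a hand-written vocabulary, not the algorithm of any specific model: real tokenizers learn their vocabulary from data (byte-pair encoding is one well-known approach). The `VOCAB` set and the `tokenize` function are hypothetical names chosen for this example.

```python
# Hypothetical sub-word vocabulary, written by hand for illustration.
VOCAB = {"develop", "er", "ing", "s", "test", "re"}


def tokenize(word, vocab):
    """Split a word into the longest vocabulary pieces, left to right."""
    tokens = []
    start = 0
    while start < len(word):
        end = len(word)
        # Shrink the candidate piece until it is found in the vocabulary.
        while end > start and word[start:end] not in vocab:
            end -= 1
        if end == start:
            # Nothing matched: fall back to a single character.
            end = start + 1
        tokens.append(word[start:end])
        start = end
    return tokens


for word in ["developer", "testing", "redevelops"]:
    print(word, tokenize(word, VOCAB))
```

Six vocabulary entries are enough to cover all three words, which is the point of the cut: a small set of reusable pieces instead of one entry per word.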

Sure, it may seem strange to see words shattered, but it's precisely this fragmentation that makes infinite combinations possible.

The cutter doesn't worry about elegance: it only thinks about making pieces small enough to be reused.

That's what tokenization does.

Without tokens the model would remain stuck, unable to work on language efficiently.

A division that seems brutal, but which is indispensable for building any sentence.

Don't be confused: you're not the one choosing the tokens, but you can understand how they work.

If you learn to manage them, you transform this apparent strangeness into an advantage.

Want to discover how?

In the AI Programming Course I show you step by step how to make it become an ally.

The positional encoding that maintains sentence order

Think of having on the workbench a pile of wooden boards all the same.

If you don't put a mark on them, how do you know which goes first and which after?

Here's where the level comes in.

Not the one used to check if the floor is straight, but the one that allows you to maintain alignment.

Positional encoding works like this: it puts a mark on each piece, so that order isn't lost.

For the machine, without this mark, "The dog bites the man" and "The man bites the dog" would be identical.

The tokens are the same, but order changes everything.

It ensures that pieces fit in the correct sequence, like when you mount a shelf and you know that the longest screw goes at the bottom, not on top.

The trick is giving each position a numerical imprint.

So the model not only recognizes tokens, but also knows where to place them in the sentence.
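Here is a rough sketch of one way to build that numerical imprint: the sinusoidal scheme used by the original Transformer architecture. Other models use different schemes, and the four-dimensional token vectors below are invented for the example.

```python
import math


def positional_encoding(position, dim):
    """Sinusoidal position vector; dim is assumed to be even."""
    encoding = []
    for i in range(0, dim, 2):
        angle = position / (10000 ** (i / dim))
        encoding.extend([math.sin(angle), math.cos(angle)])
    return encoding


# Hypothetical 4-dimensional token vectors, invented for this example.
TOKEN_VECTORS = {
    "the":   [0.1, 0.3, 0.2, 0.0],
    "dog":   [0.9, 0.1, 0.4, 0.7],
    "bites": [0.2, 0.8, 0.6, 0.1],
    "man":   [0.7, 0.5, 0.1, 0.9],
}


def encode(sentence, dim=4):
    """Add a position vector to each token vector, in sentence order."""
    encoded = []
    for position, token in enumerate(sentence.lower().split()):
        mark = positional_encoding(position, dim)
        encoded.append([v + m for v, m in zip(TOKEN_VECTORS[token], mark)])
    return encoded


first = encode("The dog bites the man")
second = encode("The man bites the dog")

# Same bag of tokens, but the marked vectors are no longer identical.
print(sorted("the dog bites the man".split()) == sorted("the man bites the dog".split()))
print(first == second)
```

The two sentences contain exactly the same tokens, yet "dog" at position 1 and "dog" at position 4 end up as different vectors, so the order survives.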

It's not intuition, it's pure organization.

It's the way the system holds together the fabric of a discourse, keeping it from becoming a puzzle scattered on the floor.

When you watch text flow from a model, think of this invisible level working behind the scenes.

You don't notice it, but without it, every word would be a lost piece, unable to respect sentence logic.

Positional encoding is the guarantee that everything stays in balance.

Fine tuning and RLHF when humans correct the machine

Reopen the toolbox and you find a file.

It's not for building from scratch, but for refining.

Fine tuning and Reinforcement Learning from Human Feedback work just like this: they take a rough tool and make it more precise, polished, suitable for a specific task.

A model trained on enormous amounts of text is like a knife just out of the forge: it cuts, but roughly.

With fine tuning you specialize it:

- If you want an assistant for code, you train it on programming repositories

- If you want a model that answers legal questions, you expose it to legal texts

- If you want support for creative writing, you accustom it to stories and dialogues

Each step refines it, like file strokes on an irregular surface.

Then there's RLHF, the human correction that adds another level.

Here we're not talking about more data, but preferences.

Users evaluate responses: this one is good, this one isn't.

The model assimilates judgments and adjusts its behavior, learning to seem more natural, more useful.

It doesn't become more intelligent: it aligns better with what we expect.

For you as a developer, the lesson is clear: the machine doesn't improve by itself, but through human work that intervenes, corrects, shapes.

Every time you see a model respond better than yesterday, imagine a file that has smoothed the edges.

It's an artisan process, even if masked as futuristic technology.

Context window the limit of short-term memory

Every toolbox has limited space.

You can put many tools in it, but not all: sooner or later you have to choose.

The context window works the same way: it's the model's short-term memory.

No matter how big its network is, it must work on a defined number of tokens.

This means that, while writing, the model only remembers a certain stretch of discourse.

If the window is a few thousand tokens, texts that are too long get lost: the first sentences vanish, as if they were erased.

It's not an accidental defect, it's a structural limit.

To understand, imagine working on a project with a box of screws.

If you only have a hundred, you can fasten pieces up to a certain point.

If you need more, you have to reload.

That's what the model does: it has a maximum capacity, beyond which it can't go.

And for the developer this is important news: you can't expect AI to hold together an entire book without losing pieces.

You must learn to work with this constraint, breaking problems into smaller segments.
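A minimal sketch of both ideas, the window that forgets and the split into smaller segments, could look like this. Splitting on whitespace stands in for a real tokenizer, and the function names are hypothetical; actual systems may reject over-long input or handle it in other ways.

```python
def fit_to_window(tokens, max_tokens):
    """Keep only the most recent max_tokens tokens; older ones are dropped."""
    if max_tokens <= 0:
        return []
    return tokens[-max_tokens:]


def split_into_chunks(tokens, max_tokens):
    """Break a long token list into consecutive pieces that each fit the window."""
    if max_tokens <= 0:
        raise ValueError("max_tokens must be positive")
    return [tokens[i:i + max_tokens] for i in range(0, len(tokens), max_tokens)]


# Whitespace splitting is an assumption that keeps the example short.
tokens = "the first sentences vanish as if they were erased".split()

print(fit_to_window(tokens, 4))
print(split_into_chunks(tokens, 4))
```

The first call shows what a hard limit does to a long text: only the tail remains. The second shows the workaround described above, where the same text is processed piece by piece.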

The context window is a reminder: even the most imposing system has its physical limits.

Every model has its limits, but those who know them can bend them to their advantage.

If you want AI to truly become your ally, you need to also know how to work around its short memory.

In the AI Programming Course I show you step by step how to do it, before your competitors do.

Why prompt engineering changes response quality

Pick up a screwdriver.

No matter how robust it is, if you use it badly you ruin the screw.

Prompt engineering is this: the art of using the tool the right way.

The model always responds based on how you instruct it, and question quality determines response quality.

Writing a prompt is like putting the tip in the correct groove; if you're imprecise, you slip and ruin the work.

If you're clear, you get a perfect tightening.

That's why two developers, using the same model, get opposite results: it doesn't depend on the machine, but on the hand guiding the tool.

Many believe it's enough to ask, but you know that every tool has its technique.

If you want to write code, you must specify language, context, and constraints.

If you want a summary, you must say length, tone, and purpose.
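One way to make those details hard to forget is to assemble the prompt from explicit fields. The sketch below is only an illustration: the function name and the "Task / Language / Context / Constraints" labels are assumptions, not a format that any model requires.

```python
def build_code_prompt(task, language, context, constraints):
    """Assemble a prompt that states language, context, and constraints explicitly."""
    lines = [
        f"Task: {task}",
        f"Language: {language}",
        f"Context: {context}",
        "Constraints:",
    ]
    lines.extend(f"- {item}" for item in constraints)
    return "\n".join(lines)


prompt = build_code_prompt(
    task="Write a function that validates an email address",
    language="Python",
    context="Part of a signup form backend",
    constraints=["standard library only", "include a docstring"],
)
print(prompt)
```

Compared with a bare "write me an email validator", every field here removes one thing the model would otherwise have to guess.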

Every added detail is like a more decisive pressure on the screwdriver, bringing you closer to the desired result.

The model doesn't read your intentions: it works only from the text it receives, converted into numbers.

And if you learn to handle the screwdriver of prompt engineering, you discover that the same machine that seemed useless suddenly becomes reliable.

This is competence in tool use, not magic.

Decoding the process by which the model decides what to write

Now imagine using a torque wrench.

It's not just for tightening, but for deciding how much force to apply.

Decoding works like this: it establishes, step by step, which word to generate.

Each token is not chosen by inspiration, but by calculation.

The model evaluates probabilities: among all possible options, which is the most suitable to follow the text so far?

Sometimes it chooses the most probable, other times it introduces variations.
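A single decoding step can be sketched as follows. The scores in `LOGITS` are invented, standing in for what a model might output for the next token after a phrase such as "The man walks his"; the softmax function that turns scores into probabilities is standard.

```python
import math
import random

# Hypothetical raw scores for three candidate next tokens.
LOGITS = {"dog": 2.0, "cat": 1.5, "banana": -1.0}


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    highest = max(logits.values())
    # Subtracting the maximum keeps the exponentials numerically stable.
    exps = {token: math.exp(score - highest) for token, score in logits.items()}
    total = sum(exps.values())
    return {token: value / total for token, value in exps.items()}


def pick_most_probable(probs):
    """Always choose the single most likely token."""
    return max(probs, key=probs.get)


def pick_by_sampling(probs, rng):
    """Draw a token at random, weighted by its probability."""
    tokens = list(probs)
    weights = [probs[token] for token in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]


probs = softmax(LOGITS)
print(probs)
print(pick_most_probable(probs))
print(pick_by_sampling(probs, random.Random()))
```

The first picker always returns the same token for the same scores; the second is where the variations come from.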

It's like deciding how much to tighten a bolt: too little and it loosens, too much and you risk breaking it.

This invisible process is what transforms numbers into sentences.

There's no intuition, but a sequence of probabilistic choices.

The more you know the mechanism, the more you realize that every written word is not the fruit of creativity, but of systematic calculation.

Understanding decoding puts you in the position of someone who can predict and influence model behavior.

No longer a spectator, but an artisan who knows where to apply force and where to leave space.

Every output becomes the result of well-calibrated tightening.

Greedy, sampling and beam search three different ways to generate text

In the toolbox you find different drill bits.

They look similar, but each works in a different way.

That's what greedy decoding, sampling, and beam search are.

They're strategies for deciding how to explore possibilities when the model writes.

Greedy decoding is the fast bit: it always takes the most probable option, without hesitation.

It's quick, but risks producing monotonous texts, always the same.

Beam search is more cautious: it explores multiple paths simultaneously, evaluates different sequences and then chooses the best.

It's slow, but produces more refined results.

Sampling sits in between: instead of always taking the top option, it draws the next token at random in proportion to its probability, balancing speed and variety.
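The difference between always taking the top option and keeping several paths open shows up even on a tiny example. The probability table below is invented so that the locally best first step leads to a worse overall sequence; the function names are hypothetical and the code is a sketch, not a production decoder.

```python
import math

# Hypothetical next-token probabilities, keyed by the previous token.
NEXT = {
    "<s>": {"the": 0.6, "a": 0.4},
    "the": {"cat": 0.4, "dog": 0.3, "bird": 0.3},
    "a":   {"cat": 0.9, "dog": 0.1},
}


def greedy(start, steps):
    """At every step take the single most probable next token."""
    sequence, log_prob = [start], 0.0
    for _ in range(steps):
        options = NEXT.get(sequence[-1])
        if not options:
            break  # no known continuation for this token
        token = max(options, key=options.get)
        log_prob += math.log(options[token])
        sequence.append(token)
    return sequence, log_prob


def beam_search(start, steps, beam_width):
    """Keep the beam_width best partial sequences at every step."""
    beams = [([start], 0.0)]
    for _ in range(steps):
        candidates = []
        for sequence, log_prob in beams:
            options = NEXT.get(sequence[-1])
            if not options:
                candidates.append((sequence, log_prob))  # carry finished paths over
                continue
            for token, p in options.items():
                candidates.append((sequence + [token], log_prob + math.log(p)))
        candidates.sort(key=lambda item: item[1], reverse=True)
        beams = candidates[:beam_width]
    return beams[0]


for name, (sequence, log_prob) in [
    ("greedy", greedy("<s>", 2)),
    ("beam", beam_search("<s>", 2, beam_width=2)),
]:
    print(name, sequence, round(math.exp(log_prob), 2))
```

With this table, the greedy path commits to "the" because 0.6 beats 0.4, and ends with a total probability of 0.24. The beam keeps "a" alive for one more step and finds "a cat" at 0.36.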

For you, as a developer, this means not all model responses are born the same way.

Changing strategy is like changing the bit: it may seem like a detail, but it influences the entire work.

Knowing these differences frees you from the illusion that generated text is always inevitable.

In reality, behind every line there are precise choices, and you can decide which tool to use to get the effect you seek, just like an artisan who changes the bit to suit the material.

Behind every sentence generated by AI there's no magic, but mathematical choices you can guide.

If you learn to handle these levers, text will no longer be random but under your control.

Want to learn the techniques that make the difference?

This is the right moment to do it with our AI Programming Course.

Temperature and top k the knobs that regulate creativity and control

Now you have before you a drill with two knobs: one regulates speed, the other precision.

Temperature and top k are these knobs.

They don't create anything by themselves, but radically change how the model generates text.

Temperature establishes how much the model should risk:

- Low temperature: chooses more probable options and produces safe but predictable texts

- High temperature: introduces variants, sometimes brilliant and creative, other times incoherent

- Intermediate values: balance stability and originality, modulating unpredictability

It's like increasing drill speed: more thrust, more surprises.

Top k, instead, limits choices.

It tells the model: "consider only the k best options".

It's the control that reduces chaos, narrowing the field.

The more you tighten, the safer the sentence becomes, but less creative.

It's the precision knob.
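Both knobs are small pieces of arithmetic applied to the model's scores before a token is drawn. The sketch below uses invented scores and hypothetical function names; dividing scores by the temperature and keeping the k most probable tokens are the standard definitions of the two settings.

```python
import math

# Hypothetical raw scores for four candidate next tokens.
LOGITS = {"the": 3.0, "a": 2.0, "one": 1.0, "banana": 0.0}


def apply_temperature(logits, temperature):
    """Divide scores by the temperature, then convert them to probabilities."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = {token: score / temperature for token, score in logits.items()}
    highest = max(scaled.values())
    exps = {token: math.exp(score - highest) for token, score in scaled.items()}
    total = sum(exps.values())
    return {token: value / total for token, value in exps.items()}


def top_k_filter(probs, k):
    """Keep only the k most probable tokens and renormalize them to sum to 1."""
    kept = sorted(probs, key=probs.get, reverse=True)[:k]
    total = sum(probs[token] for token in kept)
    return {token: probs[token] / total for token in kept}


cold = apply_temperature(LOGITS, 0.5)  # low temperature: sharper distribution
hot = apply_temperature(LOGITS, 2.0)   # high temperature: flatter distribution

print({token: round(p, 3) for token, p in cold.items()})
print({token: round(p, 3) for token, p in hot.items()})
print(top_k_filter(hot, 2))
```

At low temperature nearly all the probability piles onto the top token; at high temperature the unlikely "banana" gets a real chance. Top k then removes it from the draw entirely, whatever the temperature.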

Playing with these settings means deciding what kind of text you want.

Do you want stability or inventiveness?

Do you want a technical manual or a fantastical story?

Every adjustment takes you to a different result: it's not randomness, it's control over tools.

And it's here that the developer can truly guide AI, instead of suffering it.

Why in the end AI is just calculation and not thought

Close the toolbox.

You look at the tools one by one: tape measure, cutter, level, file, screwdriver, torque wrench, bits and knobs.

They're tools.

Precise, indispensable, but devoid of consciousness.

None of them knows what you're building.

None reflects.

And the same is true for AI.

Behind the facade of fluent sentences there's no thought being born, but a set of calculations flowing.

It's powerful, yes, but it's a mechanism.

And like any mechanism, it only makes sense in the hands of those who know how to use it.

For you, as a developer, this is not a threat.

It's an opportunity.

It means you can understand AI, dismantle it, handle it like every other tool you've encountered in your career.

You're not facing a substitute, you're facing a new toolbox.

And the difference will always be made by who puts their hands on it.

Finally, you've seen how the "toolbox" is made inside: no magic, just calculation.

Now you have two roads.

Remain a spectator, continuing to look at AI as a mystery advancing... or enter the workshop and learn to use it to write code, design solutions and lead change instead of suffering it.

You have no more excuses.

The time to decide is now.

Every day you wait, others learn to handle these tools and build their future.

You risk being left behind.

The good news is you can learn to manage it: with the AI Programming Course you transform doubts into concrete skills and start using AI with confidence.

It's not a purely theoretical course, but a path that immediately puts the right tools in your hands, teaches you to use them and takes you to the other side of the barricade: from those who suffer the revolution to those who lead it.

My course is your only weapon to turn the situation around: it doesn't promise you theories, but takes you directly inside AI's mechanism and teaches you to operate it correctly.

Don't click if you're content being a spectator.

Click only if you're ready to take your career into your own hands.