When we talk about artificial intelligence in the company, the first image that emerges in the minds of many entrepreneurs is that of a brilliant and fast system, able to answer any question in the blink of an eye.

A tireless ally, always ready to relieve the team of repetitive tasks, to increase margins, to give your business that advantage that everyone seeks and few manage to obtain.

But that's just the surface.

Behind the shiny facade, the truth is less spectacular and much more uncomfortable: most AI projects fail not for lack of technology, but for lack of foundation.

It's not the power of the model that determines the value, it is the solidity of the flow that feeds it.

And let me tell you one thing straight: this is not a technical article.

You won't find parameter lists, framework comparisons, or engineer-like diagrams.

Because we're not talking about a developer's toy, but about an investment for entrepreneurs.

And whoever leads a company does not think in terms of "model architecture", but in terms of ROI, margins, real return.

If you think that the secret to transforming your business lies in the raw power of the AI you choose, you are still at the stage where many are deluding themselves.

You've probably already seen the gold rush around ChatGPT, Claude, and the ever-larger and more sophisticated models.

Maybe you've even experimented with some of these tools.

At first it seemed like magic: “Wow, this AI does incredible things!”; then came the moment of truth.

When you tried to really integrate it into your processes, something got stuck.

The answers were not precise enough, they did not adapt to your language, your needs, your structure.

The costs were growing, and so were the errors.

And that initial excitement gave way to a familiar feeling: frustration.

Here's what no one tells you directly: the model is not the solution.

And that's not even the problem.

It's just a tool.

A hammer may be great, but if you don't have a solid design, you won't build anything lasting.

The difference is not in the force of the blow.

It lies in the design of the structure, in the order of the bricks, in the foundation on which everything rests.

The real revolution does not happen in the model, but in the flow.

It is in the pipeline, in the path by which information flows through your organization, is processed and transformed into operational responses and profit.

Without a clear system, GPT power is not an asset; it's just smoke.

Do you want answers that really work in your context?

Do you want an AI that knows your business, your documents and your processes like your best team knows them?

You need to stop thinking about the model as the solution and start thinking about flow.

Because it is the flow that transforms an expensive toy into a competitive lever.

It is the RAG pipeline, when it is well designed, that makes the difference between those who talk about AI and those who build a lasting advantage on it, between those who are enthusiastic about the technology and those who use it to generate margins real.

What RAG pipeline really means

Let's forget the acronym for a moment.

RAG is just an acronym, and acronyms often obscure what really matters.

What needs to be understood is not the name, but the logic behind it: how to allow an artificial intelligence model to talk about your company, your documents and your information with precision and relevance.

Many entrepreneurs hear the word "pipeline" and immediately associate it with something technical, obscure, to be delegated to someone else.

But the RAG pipeline is not a detail for developers, it is the economic backbone of any serious AI project.

It's what connects your company's internal knowledge to a system capable of returning answers useful, coherent and immediately spendable in terms of productivity and margins.

Imagine a giant corporate library.

Inside are years of documents, procedures, avoided errors, valuable insights, customer and process data.

Everything is there, but buried under the weight of disorganization, different formats and long and frustrating research paths.

Now imagine being able to ask her a question and receive a clear, contextualized and useful answer, as if the most expert person in your company was answering you.

This is, in essence, what a RAG pipeline does.

It's not about magic, it's about a logical system, built with method, which organize your knowledge intelligently and makes it available to the model.

Instead of a generic AI, you get intelligence trained on your reality.

Think, now, of a gold mine.

Without the tools to extract and process it, that wealth remains underground.

The RAG pipeline is just that infrastructure: it doesn't invent anything, but turn what you already have into an advantage immediate competitive.

And this is where many go wrong.

They start with the model, buy access, invest in attractive interfaces and expect fast results.

But they ignore the invisible part, the one that separates a project that dazzles in demos from one that produces value month after month.

Without a pipeline, AI is not an investment, but a cost disguised as innovation.

Focusing only on the model means losing control of it.

Responses become inconsistent, teams become irritated, times drag on; instead of speeding up, you slow down, instead of optimizing costs, you multiply them.

The real turning point comes when you flip the perspective: you don't have to ask yourself which model to choose, but which flow to build to ensure that any model becomes a multiplier of value.

The RAG pipeline is just that: a system that takes your knowledge, structure and makes it accessible and usable with surgical precision.

Those who understand this principle don't just use AI: they govern it.

And when you govern it, it stops being an experiment and it becomes a production tool.

Of course, understanding the concept is not enough.

Building a solid pipeline is what distinguishes those who talk about AI from those who turn it into a stable competitive advantage.

While many competitors chase the latest trendy tool, those who work on flow conquer market shares, accelerates decision-making processes and frees up internal resources for what really matters: growing the company.

How to prepare documents for the pipeline

Why do so many companies fail to build effective AI pipelines?

It's not the model's fault.

The real fracture opens much earlier, at the point that too many jump lightly: data preparation.

Every entrepreneur knows that the quality of a process arises from the quality of the inputs.

If you feed an AI system with messy, redundant or obsolete documents, you won't get brilliant answers, but slowness, ambiguity and risks.

This is not a technical problem, it is an economic problem.

Every minute wasted fixing an error generated by dirty data is an operating cost real.

Corporate knowledge is already there, in forgotten archives, scattered emails, infinite versions of files and procedures stratified over the years.

But if it stays buried, it's just wasted potential.

Preparing documents for the pipeline is not just about “giving data to AI”, it means transforming a dormant asset into leverage strategic.

And here the first illusion falls: believing that "the model will fix everything".

He won't.

The model doesn't correct, it amplifies.

If the base is fragile, the whole system wobbles.

For this reason, the preparation of documents is not an accessory phase, but the most important.

This is where your return on investment is made or broken.

The process can be divided into three key steps:

- Inventory: It's not enough to know you have documents: you need to know what they contain, which are crucial and which are redundant. What is the official version of each procedure? Which documents contain critical information? If there are three different versions of the same operating instruction, which is the valid one? Doing this work means regaining control over corporate knowledge and reducing future uncertainties.

- Clean: Many companies are shocked when they discover how much digital ballast they carry around. A significant portion of the documents are obsolete, incorrect or duplicated. Eliminating what isn't needed and updating what matters is an act of strategic clarity, not a technical task. Without cleaning, the pipeline is not a solid backbone, but a labyrinth full of noise.

- Structuring: The difference between a chaotic archive and an intelligent infrastructure is context. This is where metadata comes into play: who wrote that document, when, for which department, whether it is still valid, what the official version is. These links are what transform a simple file into a useful node in the pipeline, capable of providing consistent and reliable responses.

When this phase is performed rigorously, the transformation is tangible.

Teams become more autonomous, onboarding becomes shorter, errors are reduced, customers perceive greater consistency.

And above all, AI stops being a gadget and it becomes a production machine which generates real margins.

Those who underestimate the preparation of documents believe they are saving money.

In reality, a grave is being dug.

Those who invest time and method in this step instead build the basis on which the entire pipeline can really work.

And it is precisely there that the competitive advantage arises: not in the model, but in the quality and intelligence of the flow that fuels it.

There is no artificial intelligence capable of saving you from confusing data.

If you want solid results, you need a solid foundation: preparing your documents methodically is the first step to transforming chaos into real margins.

In Programming course with AI you learn to build this foundation with precision, so that technology works for you, not against you.

Start now, before your competition does it for you.

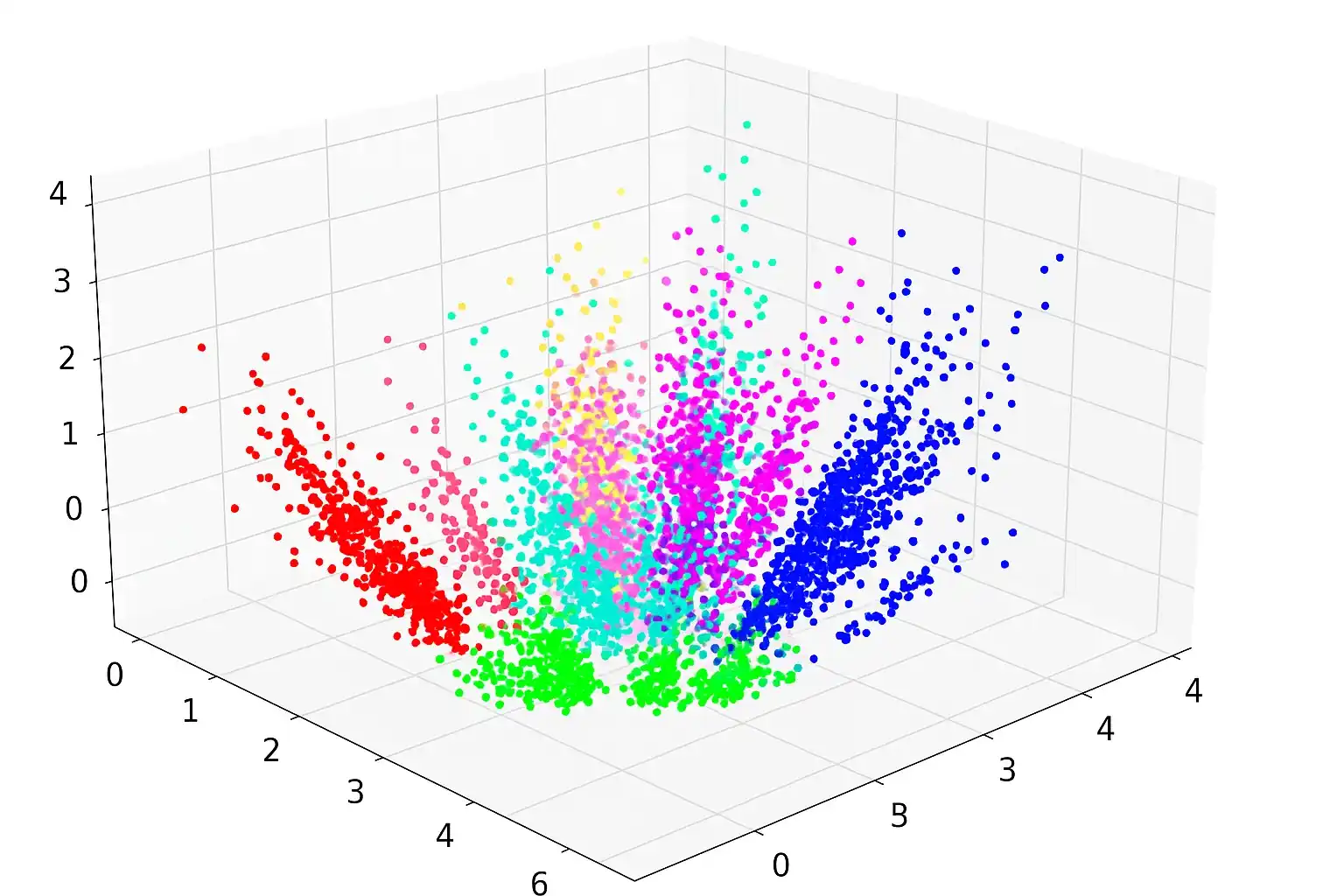

Generate embeddings correctly

This is the most underrated phase of the entire pipeline, yet it is the one that decides whether AI becomes useful or remains an exercise in style.

Embeddings are not a technicality to be left to developers: they are the way the system understands your content.

In practice, they transform documents and questions into a form that the machine can compare precisely, so as to retrieve what it needs and construct answers relevant to your context.

Imagine following a map with the wrong distances: even with the fastest car, you would never get where you want to go on time.

The same happens with embeddings.

If they are inaccurate, everything else falls apart: vague answers arrive, the team loses trust, customers get nervous, costs increase without any real benefit.

What does “doing them well” mean in business terms?

It means making sure the system really understands your language, your use cases, your operational priorities.

It's not about numbers and metrics: it's about consistency.

The same logic with which you transform documents must apply to applications; the template you use must be suitable for your content; the granularity must allow the right information to be isolated without noise.

In other words: “Are we creating the conditions for the machine to correctly interpret what we want it to interpret?”.

Many companies skip this step and rely on generic settings designed for “a little bit of everything.”

The result is abstract intelligence that doesn't speak the language of your business.

However, when the conversion of knowledge is handled methodically, something very concrete happens: the answers become predictable.

And when a technology is predictable, you can plan, measure, scale.

You're no longer playing with AI: you're building an operating system for your business knowledge.

Treating this phase with the same discipline with which you set up a financial or commercial process makes the difference.

All information must be converted cleanly, contextualized and made comparable.

Think of embeddings as strategy translators: if they translate well, AI works for you; if they translate poorly, it exposes you to costly mistakes.

This is where the real competitive advantage comes from: customizing the conversion process to suit your needs. It is not a marginal detail, it is the borderline between a pipeline that "works" and one that shifts the margins.

When the system recognizes nuances, internal synonyms, specific terminology and your company's operational priorities, each answer becomes a useful piece, not an attempt.

It is in this alignment that AI it stops being an expense and starts producing returns.

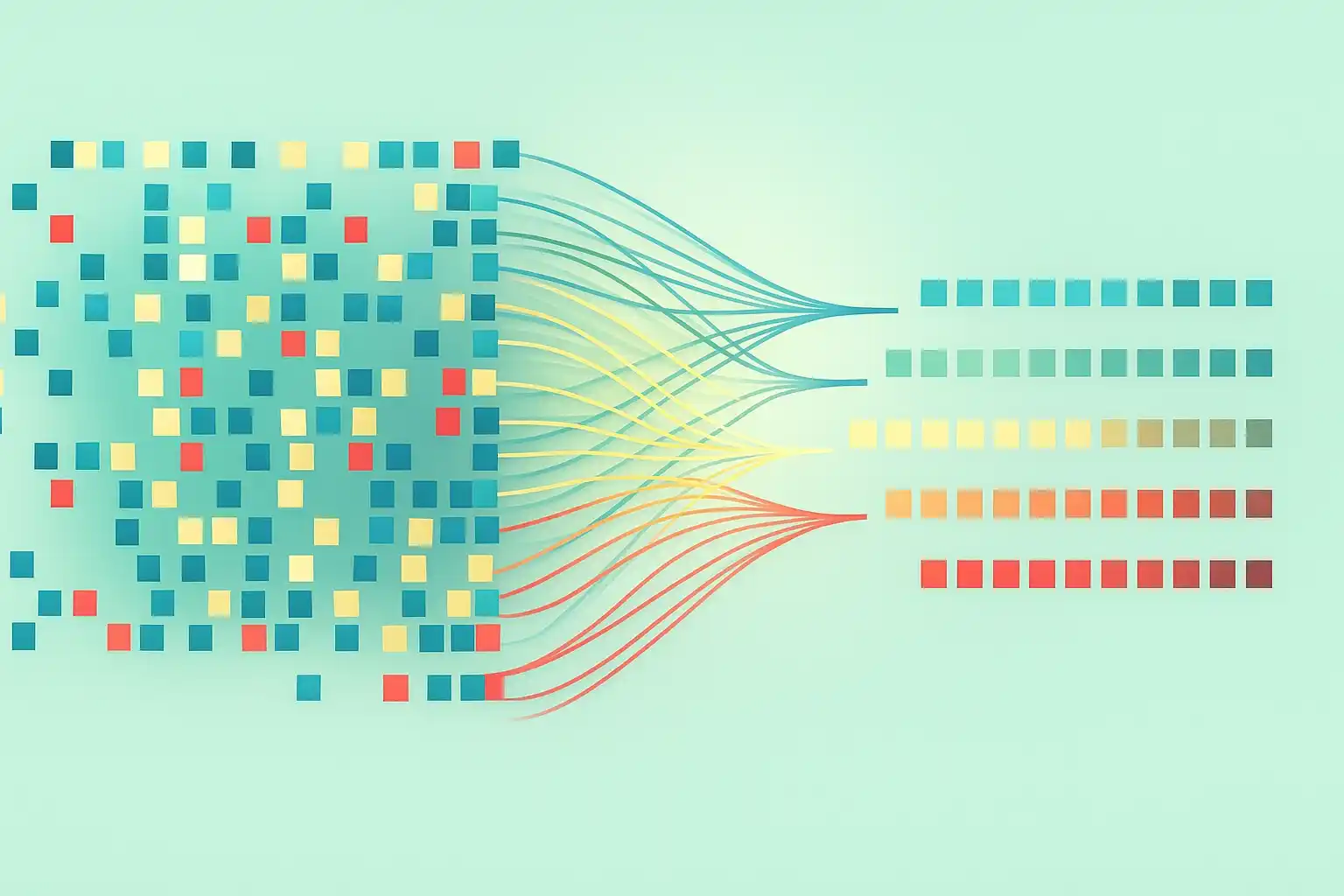

Store embeddings in Qdrant

Once the knowledge has been transformed into structured data, the next step is keep it so it stays alive, accessible and tidy.

Archiving does not mean "putting aside". It means creating a strategic corporate memory, capable of supporting every future decision.

And this is precisely where many companies lose control.

They treat this phase as a technical detail, when in reality it is a lever of efficiency and competitiveness.

Filing badly means turn every search into a maze.

A labyrinth costs: wasted time, growing frustration and processes that slow down.

Storing well, however, means building an intelligent memory that returns exactly what is needed, when needed.

Anyone who has experienced information chaos knows this: hours of work are lost every day in micro-activities that do not produce value.

Teams blocked because they cannot find the right documents, collaborators who improvise due to lack of clarity, critical information that is dispersed in a thousand versions.

This is not a minor annoyance.

It's a cost that erodes margins and slows growth.

The solution is to build a strategic archive, not a cemetery of forgotten files.

Qdrant, or any other vector database, is not the solution itself, but the tool that allows you to give structure to your knowledge.

Not archives to accumulate: archives to make every information exploitable with precision.

Qdrant has one key feature: it stores millions of embeddings and returns them in milliseconds.

But it's not enough to just "use" it.

A clear logic is needed to prevent speed from becoming chaos.

And here two fundamental strategic considerations come into play:

- Data Consistency: What happens when you update a document? Are embeddings updated automatically? Are older versions handled correctly? Data consistency is not a technical detail: it is an operational imperative. If you lose alignment, everything you build on top becomes fragile.

- Archive reliability: If your database structure is weak, you risk losing critical embeddings or recovering old, inconsistent versions. Any error at this stage propagates through the rest of the pipeline, resulting in bad responses and compromised decisions. An untrusted archive undermines trust in the entire system.

When you correctly implement a vector database like Qdrant, you are building a solid infrastructure, similar to a bank for your business knowledge.

No more money hidden in a drawer, but a system with backup, redundancy, speed and security.

That's why I insist: flow matters as much as pattern.

A brilliant model, fueled by slow, old or inconsistent data, is worthless.

On the contrary, a well-designed archive turns every search into a shortcut towards reliable answers.

You're not just saving time: you're reducing risk, increasing the quality of decisions, and building a foundation of trust throughout your AI ecosystem.

When your corporate memory works, stop chasing information and you start to govern them.

And it is at this moment that AI stops generating chaos and becomes a source of operational and strategic clarity.

Every second wasted searching for information is money wasted.

Storing your embeddings strategically isn't a technical task – it's a competitive accelerator.

In Programming course with AI you learn to build and manage a solid corporate memory, so as to make faster and more precise decisions.

If you control your company's memory, you control the speed at which you make decisions.

Do it now, not when it's too late.

Integrate semantic search into your pipeline

Good: at this point your documents are ready, the embeddings generated and archived reliably.

The next step is equally fundamental: semantic search.

But what does this mean practically?

It means that when a user or application asks the system a question, the machine doesn't just look for identical words to those written.

It goes further.

Interpret the meaning, grasp the intention behind the question and retrieve documents that address the same concept, even if formulated differently.

This detail makes a huge difference.

People don't talk like computers: they use synonyms, variable constructions, different contexts.

A search engine based only on keywords ends up ignoring much of the information relevant.

With semantic search, however, the machine understands and adapts, finding precisely what is needed.

For an entrepreneur, this is not a fringe benefit: it means having an AI that stops functioning in a rigid manner and starts behaving like a capable collaborator.

A collaborator who doesn't need to know where the information is, but he knows how to find it in a few moments.

All it takes is a clear question, and the answer comes.

However, many companies remain anchored to a mechanical approach, based on keywords.

They search for literal terms, get confusing results, get lost in multiple versions of the same file.

It's a slow, expensive and frustrating system, which blocks workflows and burns margins every day.

And awareness always comes in the same way: when you realize that every minute wasted looking for documents is money wasted.

Collaborators become demotivated, processes get stuck, AI does not return any concrete value.

Instead, allowing the system to understand the meaning, not just the words, completely changes the game.

Semantic search turns the pipeline into a decision engine, no longer in a simple queryable archive.

Instead of searching, you talk to your business knowledge.

This drastically reduces downtime, shortens decision-making steps and frees up operational resources.

The hours saved each week they become thousands of euros a year recovered.

This is a powerful business lever.

With semantic search, every question receives a coherent and contextualized answer, teams become autonomous, processes flow more fluidly, decisions arrive sooner.

You're shortening the distance between demand and action, and it's that distance that decides who climbs and who falls behind.

Connect a template to generate responses

Now comes the grand finale: the moment when the generative model responds.

This is where everything takes shape, but this is also where many fall into the trap.

The model is not the heart of the pipeline, it is simply the final tool who uses what you make available to him.

Many companies are seduced by the apparent ease: an attractive interface, a few clicks, and the machine responds.

But those answers, if there is no structured pipeline behind them, are inconsistent, fragile and unusable on a strategic level.

They don't generate value, they only generate smoke.

And smoking, in the balance sheets, is worth nothing.

Disappointment comes quickly when you discover that the model alone solves nothing; on the contrary, it amplifies any hidden errors in the flow.

If the underlying information is wrong or incomplete, the answer will be equally wrong, even if apparently convincing.

The solution is simple but counterintuitive: the model should be connected after the pipeline has been built with rigor.

When you do it right, something powerful happens.

The system receives a question, transforms it into an embedding, searches the vector database for the most similar documents, retrieves the correct context and passes it to the model.

At that point, the model does not invent: it interprets.

Look at the context and generate a coherent response, specific and aligned to your business reality.

The quality of that response does not arise from the "magic" of the model, but from three fundamental elements, in this order:

- The quality of the documents you provided him. If the foundation is solid, every response will be.

- The consistency of the pipeline, from embedding generation to data recovery. It is the structure that guarantees reliability.

- The model itself, which is only the last link in the chain.

Yet, most companies focus 90% of their attention on the last point and almost none on the first two.

And this is why so many AI pipelines fail: not because the model isn't “intelligent” enough, but because it rests on weak foundations.

However, when everything is aligned, the answers become coherent, every interaction makes sense and is predictable, and AI stops being an experiment to become an operational asset.

The model is not the source of value; it is the tool that transforms what you have built behind the scenes into concrete answers.

The last step of a system built with method and coherence.

The model is not magic, it's an amplifier.

If you connect it to a carefully constructed pipeline, every response becomes an operational lever, not a gamble.

In Programming course with AI you learn to make models and flows speak as a single system, so as to transform AI into a strategic ally and not a risk.

Don't wait for technology to surpass you: govern it, and turn it into your most powerful ally.

Real examples of RAG pipelines

To truly understand the scope of a RAG pipeline, abstract schemes or definitions are not enough.

We need concrete examples, real cases in which the integration between corporate knowledge and artificial intelligence it generated tangible and measurable results.

A SaaS company running a business process platform had a recurring problem: the support team was overwhelmed with repetitive requests, and useful documentation was scattered across various files and archives.

Building a RAG pipeline it completely changed the scenario.

The documentation was cleaned and structured, then transformed into embedding and archived in Qdrant.

A generative model, linked to semantic search, made answers immediate, precise and coherent, regardless of how the question was phrased.

In three months average response times were reduced by 60%, customer satisfaction increased and the team was able to focus on higher value activities, freeing itself from the burden of repetitive FAQs.

A law firm with over thirty years of activity faced a different but similar challenge in substance.

Thousands of contracts, judgments and internal memos sat in hard-to-navigate archives; finding a specific clause meant wasting precious hours.

By digitizing the entire archive, segmenting it and transforming it into embeddings stored in a vector database, it was possible to query the legal heritage with a simple natural language.

The integrated GPT model made searches that previously took hours instantaneous, improving the quality of work and the speed in preparing contract drafts.

Knowledge accumulated over decades has finally become accessible, transforming itself into an operational and competitive asset.

In a large private hospital, complexity was hidden in fragmented clinical protocols.

Doctors were wasting precious minutes searching for updated procedures, at the risk of relying on outdated versions.

Here too, the RAG pipeline made the difference.

All documents have been centralized, cleaned and converted to embeddings.

Thanks to Qdrant and semantic search, an internal GPT model today provides contextual and updated answers in real time, directly during operational shifts.

This has reduced errors, increased compliance with protocols and significantly improved the quality of patient service.

Three different cases, a single winning logic: the value does not arise from the model, but from the flow that precedes it.

When corporate knowledge is prepared, organized and made questionable, artificial intelligence stops being an experiment and becomes a concrete lever of efficiency, speed and precision.

The RAG pipeline is not a technical detail, but the supporting structure that transforms dormant data into actionable decisions and real competitive advantage, shortening the distance between question and answer, between information and action.

This is the principle that these examples clearly demonstrate: the generative model alone is not enough, it is the pipeline that makes it truly useful and strategic.

Common mistakes to avoid when building your pipeline

Every entrepreneur entering the world of AI must know the difference between a profitable investment and a disastrous one lies in few errors key.

And most of these mistakes have nothing to do with technology, but with mentality:

- The first mistake is the rush: Many companies want to go directly from “we have an idea” to “we have an AI that knows our company”. They skip data preparation, thinking they can “fix it later.” In reality, then, they never will. They end up with fragile systems, inconsistent results, loss of trust and wasted investments.

- The second mistake is starting from the model: it is the most inviting shortcut, but also the most expensive trap. Without a solid flow, the model it becomes a black hole of time and resources; you end up investing in technology without building strategic foundations, and the project stalls.

- The third mistake is ignore data quality and freshness: many believe that “AI will take care of it”. Instead, AI amplifies what it receives: if the data is incomplete, redundant or obsolete, the answers will be wrong, even if convincing. An outdated pipeline becomes a brake, not an advantage.

- The fourth mistake is do not define clear objectives: Without knowing exactly what problem you are solving – customer support, internal automation, error reduction, increased sales – you cannot measure the effectiveness of your pipeline or make coherent strategic decisions.

- The fifth mistake is Don't measure results: without precise metrics (time saved, customer satisfaction, conversion rate, error reduction) you can't know if the pipeline is generating real ROI. And what cannot be measured cannot be improved or defended at a strategic level.

- The sixth mistake is treat the pipeline as a delegable technical detail: those who ignore it do not build an asset, but buy an instrument that they do not control. The pipeline is the strategic backbone, not a “piece” to be outsourced without vision.

- The seventh mistake is rely completely on external suppliers: If you don't control your pipeline, you don't own your competitive advantage: you're renting it. And in a competitive environment, those who rent their strategic infrastructure are left behind.

- The eighth mistake is separate technology from business: entrusting the construction to those who understand the technology but do not know your sector leads to pipelines that are perfect from a technical point of view, but useless in practice. AI is always contextual: a bridge between technical knowledge and strategic knowledge is needed.

- The ninth mistake is neglecting security and compliance: If the pipeline handles sensitive data, customer information, financial data, intellectual property, it must be built with rigorous processes. It's not optional: it's what determines whether you can climb with peace of mind or if you risk compromising your confidence of your customers.

- The tenth mistake is believe that AI brings value on its own: He never will. It is consistent flow that turns a model into profit. Those who understand this point build a real competitive advantage, on the contrary, those who don't understand it end up financing experiments that don't bring results.

Building a RAG pipeline does not mean "being technological", it means building a strategic machine that transforms corporate knowledge into operational power.

Those who understand this stop chasing trends and begins to create margins and in a fast-moving market, whoever controls the flow controls the competitive advantage.

The others simply fall behind.

Let's go back to where we started: you are an entrepreneur.

You're used to thinking in terms of investing and back.

A well-built RAG pipeline is an investment: it costs time and money, but the return is measurable and, if done correctly, significant.

It is not a universal solution, it will not automatically solve all your business problems.

It's a tool, smart and powerful, but still a tool.

The real question is not "should I build a RAG pipeline?", but "What specific problem in my business could be most effectively solved with a RAG pipeline, and what would be the measured return on investment?"

In Programming course with AI you learn to answer this question clearly, transforming technology from an uncertain cost into a measurable strategic lever.

If you can answer this question clearly, then you have a concrete reason to build it.

If you can't, then you're chasing technology for the sake of chasing it, and that path always ends the same way: money wasted and lessons learned.

Your business deserves better.

You deserve better.

Make the right decision, done with eyes open, with clarity of purpose, and with an obsession with measurable results.

That's the only way technology creates real value.