There is a precise moment in the life of a software architect when the concept of "server" stops being a resource and starts to become a limit.

It happens when you realize that your team spends more time configuring, patching, and scaling virtual machines than writing business logic that generates value.

It's that subtle but persistent feeling that infrastructure is becoming an anchor rather than an accelerator.

This is exactly where Azure Functions (shortened to "Azure Func" throughout this article) comes into play.

But be careful: it's not the magic wand many cloud vendors want you to believe.

If used poorly, it transforms a tidy monolith into an impossible-to-trace distributed “nanoservice,” where latency explodes and costs become unpredictable.

The transition to serverless is not just a change in technology; it is a shift in mindset.

It means you stop thinking in terms of "machines waiting for requests" and start thinking in terms of "events that trigger reactions".

However, there is a huge gap between writing a “Hello World” function that runs locally and designing a resilient, secure, and scalable serverless system in production.

In this article we won't just read the documentation.

We will dissect the architecture behind Azure Func, analyzing the real costs, hidden risks such as Cold Start, and the governance strategies that distinguish a junior who "tests" the cloud from an Architect who masters it.

If you want to build systems that scale infinitely without blowing your company budget, you need to understand what really happens behind the scenes of serverless.

What is Azure Func: the fundamental building block of serverless

Many developers define Azure Func simply as "code that runs serverless."

This definition is technically correct, but architecturally poor.

From a Software Architect's perspective, Azure Func is the dynamic glue of the cloud.

It is a Function-as-a-Service (FaaS) offering that allows you to execute small pieces of logic in response to events, completely abstracting the underlying infrastructure.

You don't have to worry about the operating system, runtime or patching: Microsoft takes care of the hosting, you just take care of the code.

However, considering it just a way to "run scripts" is an understatement.

Azure Func is designed to scale horizontally almost instantly.

If a request comes in, the cloud provisions an instance. If ten million arrive, the cloud provisions (theoretically) the resources necessary to manage them in parallel, and then destroys them as soon as the job is finished.

This “compute on-demand” model fundamentally changes the economics of software.

You don't pay for reserved capacity (as in a VM that idles at night), but you pay for actual execution: duration and memory used.

But this is where many fall down: without proper governance, fragmenting the logic into hundreds of small functions can create a "distributed monolith" that is difficult to maintain.

Understanding what Azure Func really is means understanding that it is not the solution to everything, but a precision tool for managing unpredictable loads and asynchronous workflows.

Event-driven architecture: why combine functions with the monolith

The monolith has one big advantage: it is simple. Everything is there, in memory, a function call away.

But it has a fatal flaw: it scales all at once or not at all.

If the PDF generation module consumes all the CPU, it also slows down the login module, blocking all users.

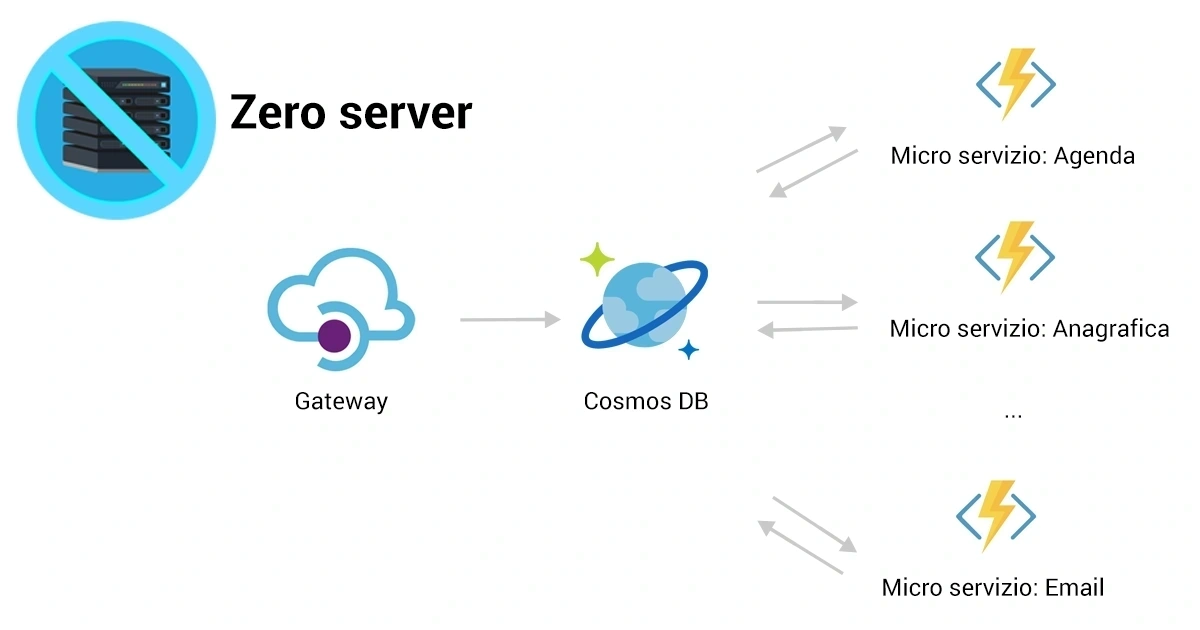

An architecture based on Azure Func pushes you toward an event-driven model.

In this scenario, the components do not "call" each other directly (tight coupling), but emit events.

A file uploaded to a Storage Account is an event. A message in a Service Bus queue is an event. A modified record in Cosmos DB is an event.

Your functions react to these events in isolation.

This brings three enormous strategic advantages for an Architect:

- Granular Scalability: if the PDF generation process is under load, Azure will scale only the functions dedicated to that task, leaving the rest of the system intact and responsive.

- Resilience: if one function fails, it doesn't drag the entire system down. The message can return to the queue to be reprocessed (retry policy) or moved to a Dead Letter Queue.

- Development speed: Different teams can work on different features in different languages (C#, Python, Node.js) without stepping on each other's toes, as long as the event contract is respected.
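The resilience point above can be sketched in plain Python. This is a minimal in-memory simulation, not the Service Bus API: `Message`, `drain`, `generate_pdf` and the `max_deliveries` limit are hypothetical names chosen for illustration. It shows a failed message going back to the queue and, after too many attempts, being parked in a dead-letter list while healthy messages keep flowing.

```python
from collections import deque
from dataclasses import dataclass
from typing import Callable


@dataclass
class Message:
    body: str
    delivery_count: int = 0  # how many times the handler has seen this message


def drain(queue: deque, handler: Callable[[Message], None],
          max_deliveries: int = 3) -> list:
    """Process every message; retry failures, dead-letter after max_deliveries."""
    dead_letters = []
    while queue:
        message = queue.popleft()
        message.delivery_count += 1
        try:
            handler(message)
        except Exception:
            if message.delivery_count >= max_deliveries:
                dead_letters.append(message)  # give up: park it for inspection
            else:
                queue.append(message)         # return it to the queue for a retry
    return dead_letters


processed = []


def generate_pdf(message: Message) -> None:
    """Hypothetical handler: fails on a poison message, succeeds otherwise."""
    if message.body == "corrupt-order":
        raise ValueError("cannot render PDF")
    processed.append(message.body)
```

Calling `drain(deque([...]), generate_pdf)` with one poison message among valid ones processes the valid ones and returns the poison message after three attempts, which is the behavior a retry policy plus Dead Letter Queue is meant to give you.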

Abandoning the monolith requires courage, but above all it requires competence in managing distributed complexity.

Many developers jump into serverless without understanding messaging patterns, ending up creating fragile systems.

In the Software Architect Course, we teach not only the syntax, but also how to design the data flow so that the system stays robust even when services fail.

Operational management: move around the Azure Portal without losing control

The Azure Portal is powerful, but it can become a labyrinth if you don't have a clear organization strategy.

When you start with just one Azure Func, everything seems easy. You click "Create", write code in the browser (never do this in production!) and see the result.

But when you have 50 functions, 3 environments (Dev, Stage, Prod) and connected resources like Storage, Key Vault and Application Insights, chaos takes over.

Operational management requires discipline.

The first step is the rigorous use of Resource Groups to logically group resources that share the lifecycle.

The second is the adoption of Infrastructure as Code (IaC) via Bicep or Terraform.

An Architect does not configure the functions manually in the portal: he defines the infrastructure as code, making it replicable and auditable.

In the Azure Portal, you need to learn to monitor not only the code, but the "health" of the Function App:

- Check your consumption plan quotas and limits.

- Manage access keys (Host Keys) and rotate them periodically.

- Correctly configure application settings (Environment Variables) without exposing secrets in the clear.

Moving through the portal without losing control means knowing exactly where to look when things don't work, ignoring the background noise of a thousand unnecessary options.

Triggers and Bindings: how to connect services while reducing code

This is the real "killer feature" of Azure Func compared to other competitors or custom solutions.

In a traditional application, if you want to read a message from a queue, you have to: instantiate the client, handle authentication, open the connection, poll, handle network exceptions, deserialize the message, and finally close the connection.

It's all "boilerplate" code: boring, repetitive, and error-prone.

Azure Func solves this problem with Triggers and Bindings.

The Trigger is what starts the function (e.g. "a new file has arrived in Blob Storage").

Bindings are the declarative way to connect input and output data.

Imagine you want to save the result of a processing step to a Cosmos DB database.

Instead of writing 50 lines of code to connect to the DB, simply add an Output Binding attribute to your function.

The Azure runtime takes care of managing the connection and writing.

This drastically reduces the technical debt linked to the infrastructure code.

Your code focuses only on pure business logic.
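To make the idea concrete, here is a toy model of declarative bindings in plain Python. It is a sketch under stated assumptions, not the Azure Functions programming model: the `with_bindings` decorator, the `orders_queue` list and the `orders_db` list are hypothetical stand-ins for a trigger, a queue and a database. The point is the division of labor: the decorator owns the plumbing, the function body contains only business logic.

```python
import json
from typing import Callable


def with_bindings(source: list, sink: list) -> Callable:
    """Hypothetical decorator: declares the input queue and the output store."""
    def decorator(func: Callable[[dict], dict]) -> Callable[[], int]:
        def run_pending() -> int:
            # The toy "runtime" owns the plumbing: read, deserialize, invoke, write.
            handled = 0
            while source:
                payload = json.loads(source.pop(0))
                sink.append(func(payload))
                handled += 1
            return handled
        return run_pending
    return decorator


orders_queue = ['{"id": 1, "qty": 2, "unit_price": 10.0}']  # stand-in for a queue
orders_db = []                                              # stand-in for a database


@with_bindings(source=orders_queue, sink=orders_db)
def price_order(order: dict) -> dict:
    """Pure business logic: no client, no connection, no serialization."""
    return {"id": order["id"], "total": order["qty"] * order["unit_price"]}
```

In this sketch, calling `price_order()` plays the role of the runtime firing the trigger: it drains the queue and writes each result to the store, and returns how many messages it handled.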

However, an Architect needs to know the limitations: bindings are great for simple operations, but for complex scenarios (distributed transactions, advanced queries) you may need to use native SDK clients to have more granular control.

Knowing when to use the magic of bindings and when to "get your hands dirty" with the SDK is what distinguishes a professional.

Microsoft Azure Pricing: Understanding Consumption, Premium, and Dedicated Models

Talking about cloud without talking about costs is irresponsible.

When you search for information about "Microsoft Azure pricing," you often end up in confusing tables. For Functions, the choice of plan determines not only the cost, but the architectural capabilities.

There are three main models, and choosing the wrong one can cost you dearly (in money or performance).

1. Consumption Plan (Pay-per-use)

It's true serverless. You only pay when the function runs.

Advantages: Scale to zero. No cost if no one uses it. Massive autoscaling.

Disadvantages: Cold Start (more on that shortly). No VNET integration (you can't easily access resources in a private network). Maximum timeout of 10 minutes.

2. Premium Plan

It's "luxury" serverless.

Advantages: No Cold Start (pre-warmed instances). Complete VNET integration. More powerful hardware. Unlimited duration (theoretically).

Disadvantages: Fixed monthly minimum cost (you pay for at least one instance that is always on), which can be significant for small projects.

3. Dedicated (App Service Plan)

Your functions run on dedicated virtual machines (like a classic website).

Advantages: Predictable cost (flat rate). You can reuse capacity you are already paying for with other services.

Disadvantages: It lacks the fast, elastic scalability of serverless. If resources run out, functions slow down.

An Architect must know how to do the math: if you have a constant and predictable load 24/7, the Dedicated plan could cost less than the Consumption.

If you have sudden spikes and long periods of inactivity, Consumption is unbeatable.
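The math can be sketched in a few lines. This is a rough estimate under loud assumptions: the two unit prices are illustrative rates that must be checked against the current price list, the flat monthly fee passed to `cheaper_plan` is hypothetical, a month is taken as 30 days, and billing details such as rounding and minimum execution time are ignored. The monthly free grant (1 million requests and 400,000 GB-seconds) is subtracted first.

```python
FREE_EXECUTIONS = 1_000_000          # monthly free grant on the Consumption plan
FREE_GB_SECONDS = 400_000            # monthly free grant on the Consumption plan
PRICE_PER_MILLION_EXECUTIONS = 0.20  # assumed rate, check the current price list
PRICE_PER_GB_SECOND = 0.000016       # assumed rate, check the current price list
SECONDS_PER_MONTH = 30 * 24 * 3600   # simplification: every month has 30 days


def consumption_cost(requests_per_second: float, avg_duration_s: float,
                     memory_gb: float) -> float:
    """Estimate the monthly Consumption bill for a constant request rate."""
    executions = requests_per_second * SECONDS_PER_MONTH
    gb_seconds = executions * avg_duration_s * memory_gb
    billable_executions = max(0, executions - FREE_EXECUTIONS)
    billable_gb_seconds = max(0.0, gb_seconds - FREE_GB_SECONDS)
    return (billable_executions / 1_000_000 * PRICE_PER_MILLION_EXECUTIONS
            + billable_gb_seconds * PRICE_PER_GB_SECOND)


def cheaper_plan(requests_per_second: float, avg_duration_s: float,
                 memory_gb: float, flat_monthly_fee: float) -> str:
    """Compare the estimate with a flat fee (hypothetical Dedicated price)."""
    cost = consumption_cost(requests_per_second, avg_duration_s, memory_gb)
    return "Consumption" if cost < flat_monthly_fee else "Dedicated"
```

With these assumed rates, a function that runs for 0.2 seconds with 0.5 GB of memory costs roughly 40 per month at a constant 10 requests per second and roughly 460 at 100 requests per second, so the same workload can flip from one plan to the other purely because of volume.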

Don't let your choice of plan be a last-minute detail: it's a critical design decision.

How to take advantage of the free Azure tier to prototype at no cost

Many are unaware that Microsoft offers a generous free tier to get started.

Looking for "free Azure" isn't for stingy people, it's for strategists. Every month, the Consumption plan includes 1 million free requests and 400,000 GB-seconds of resource consumption.

This means that for prototypes, personal development environments, or moderately loaded microservices, the compute infrastructure is literally zero cost.
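A quick way to check whether a prototype stays inside the free grant is to compute how much of each allowance it would consume. The sketch below uses only the two figures quoted above; the function name `free_tier_usage` and the example workloads are hypothetical, and real billing applies rounding rules that are ignored here.

```python
FREE_EXECUTIONS = 1_000_000  # free requests per month
FREE_GB_SECONDS = 400_000    # free GB-seconds per month


def free_tier_usage(executions: int, avg_duration_s: float, memory_mb: int) -> dict:
    """Return the fraction of each free allowance a monthly workload would use."""
    gb_seconds = executions * avg_duration_s * (memory_mb / 1024)
    return {
        "executions_used": executions / FREE_EXECUTIONS,
        "gb_seconds_used": gb_seconds / FREE_GB_SECONDS,
        "fits": executions <= FREE_EXECUTIONS and gb_seconds <= FREE_GB_SECONDS,
    }
```

Note that the two allowances are independent: 900,000 executions of 2 seconds each at 1024 MB stay under the request limit but consume 1.8 million GB-seconds, far beyond the free amount. Long or memory-hungry functions tend to exhaust the GB-seconds first.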

How to make the most of it for prototyping?

- Architectural experimentation: You can create different architectures (e.g. one based on queues, one on HTTP) and test them without having to ask management for budget.

- Sandbox environments: Each developer can have their own isolated Azure Func instance to test features before merging.

- Internal tools: Team automations (e.g. Slack bots, automatic resource cleanup) often fall well into the free tier.

Be careful though: "free" applies to computation. The Storage Account necessary to run the functions, network traffic (Egress) and accessory services such as Application Insights are paid separately (even if we are talking about negligible amounts for small volumes).

Taking advantage of the free tier allows you to fail fast and learn without financial risk.

The Cold Start Problem: Advanced Strategies to Ensure Performance

The "Cold Start" is the number one enemy of serverless architectures in the Consumption plan.

When your function is not used for a while (about 20 minutes), Azure "shuts down" the underlying infrastructure to save resources.

On the next request, the cloud must: allocate a server, download your app package, start the function runtime, and run your code.

This process can take anywhere from a few seconds (for C# or Python) up to 10-20 seconds (for Java or heavy frameworks).

For a nightly batch process, it doesn't matter. For an API serving the UI, 5 seconds of waiting is unacceptable.

What are the advanced strategies to manage it?

- Code optimization: Reduce dependencies. Loading huge libraries at startup slows everything down. Use "Dependency Injection" intelligently (e.g. Singleton for DB connections) to reuse connections in subsequent calls (Warm Start).

- Premium Plan: If latency is critical, pay for the Premium plan. It keeps "pre-warmed" instances ready to respond immediately. It is the expensive but definitive solution.

- "Keep-Alive" Strategies (not recommended but used): Create a timer function that calls your API every 5 minutes to keep it awake. It partially works, but it doesn't guarantee scalability across multiple instances and fights against the very nature of the platform.
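The first strategy, reusing expensive objects across invocations, can be sketched as follows. `DatabaseClient` is a hypothetical stand-in for any client that is slow to construct, not a real SDK class. The idea is that the client is created once per warm instance and shared by every later call, instead of being rebuilt inside the handler.

```python
import functools


class DatabaseClient:
    """Stand-in for an expensive client (hypothetical, not a real SDK class)."""
    connections_opened = 0

    def __init__(self) -> None:
        DatabaseClient.connections_opened += 1  # imagine a slow network handshake

    def save(self, order_id: int) -> str:
        return f"saved order {order_id}"


@functools.lru_cache(maxsize=1)
def get_client() -> DatabaseClient:
    """Create the client on first use, then reuse it while the instance is warm."""
    return DatabaseClient()


def handle_request(order_id: int) -> str:
    """Handler body: asks for the shared client instead of building a new one."""
    return get_client().save(order_id)
```

However many times `handle_request` is called on the same instance, `DatabaseClient.connections_opened` stays at 1. This does not remove the cold start itself, it only keeps every warm invocation from paying the connection cost again.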

An Architect does not ignore the Cold Start: he analyzes it, decides if it is tolerable for the specific use case and chooses the mitigation strategy appropriate to the budget.

Durable Functions: Manage complex, stateful workflows

Serverless functions are, by definition, "stateless". They perform a task and forget everything.

But the real world is made up of complex processes: "Order product" -> "Wait for payment" -> "If paid, ship" -> "Otherwise cancel".

Managing this with standard functions requires an external database and complex coordination logic.

This is where the Durable Functions extension comes into play.

It allows you to write "Orchestrators" in the code.

An Orchestrator is a function that defines the workflow. It can call other functions, wait for their results, sleep for days, and pick up right where it left off.

The magic? The state is automatically saved in the Storage Account.
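How can a function "pick up where it left off" after the process that ran it is gone? One way to picture it is replay: results of completed steps are recorded, and on restart the workflow is run again from the top while the recorded results are fed back instead of re-executing the work. The sketch below is a simplified plain-Python model of that idea, not the Durable Functions API; `order_workflow`, `run`, the two activities and the `history` list are all hypothetical.

```python
from typing import Callable, Generator

calls = []  # records which activities really executed


def charge_payment(order_id: int) -> str:
    calls.append("charge_payment")
    return f"receipt-{order_id}"


def ship_order(receipt: str) -> str:
    calls.append("ship_order")
    return f"tracking-for-{receipt}"


ACTIVITIES = {"charge_payment": charge_payment, "ship_order": ship_order}


def order_workflow() -> Generator:
    """Orchestrator: each yield asks the runner to execute one activity."""
    receipt = yield ("charge_payment", 42)
    tracking = yield ("ship_order", receipt)
    return tracking


def run(orchestrator: Callable[[], Generator], activities: dict, history: list):
    """Replay results already in history; execute and record only the new steps."""
    workflow = orchestrator()
    step = 0
    try:
        request = next(workflow)
        while True:
            if step < len(history):
                result = history[step]          # already done: reuse saved result
            else:
                name, argument = request
                result = activities[name](argument)
                history.append(result)          # checkpoint before moving on
            step += 1
            request = workflow.send(result)
    except StopIteration as finished:
        return finished.value
```

If `history` already contains the payment receipt from before a restart, `run(order_workflow, ACTIVITIES, history)` skips the charge and executes only the shipment. In the real service the history lives in durable storage rather than in a Python list.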

This enables powerful patterns like:

- Function Chaining: Execute A, pass the result to B, then to C.

- Fan-out/Fan-in: Run 1000 functions in parallel and wait for ALL of them to finish to aggregate the result.

- Human Interaction: Send an approval email and wait (even weeks) for the manager to click a link before continuing the process.
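The first two patterns have a familiar shape that can be shown with `asyncio` from the standard library. This is only an analogy for the control flow: unlike Durable Functions, nothing here is persisted, and the activity names `resize_image` and `add_watermark` are hypothetical.

```python
import asyncio


async def resize_image(name: str) -> str:
    """Hypothetical activity; sleep(0) stands in for real I/O."""
    await asyncio.sleep(0)
    return f"{name}:resized"


async def add_watermark(name: str) -> str:
    """Hypothetical activity that consumes the previous step's output."""
    await asyncio.sleep(0)
    return f"{name}:watermarked"


async def chain(name: str) -> str:
    """Function chaining: the output of one step is the input of the next."""
    resized = await resize_image(name)
    return await add_watermark(resized)


async def fan_out_fan_in(names: list) -> list:
    """Fan-out: start every chain at once. Fan-in: wait for all the results."""
    return await asyncio.gather(*(chain(name) for name in names))
```

`asyncio.run(fan_out_fan_in(["a.png", "b.png"]))` starts both chains concurrently and returns once both have finished, with results in the same order as the inputs.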

Durable Functions transforms Azure Func from a simple script executor to an enterprise workflow engine.

In the Software Architect Course, we dive into how to use these patterns to replace complex, expensive legacy systems with clean, maintainable code.

Security and monitoring: Secure APIs and use Application Insights

Launching a public function without protection is like leaving your front door open in the city centre.

Security in Azure Func is managed at multiple levels.

At a basic level, there are the Function Keys (secret keys in the URL), but for serious architecture they are not enough.

The correct approach is to integrate with Microsoft Entra ID (formerly Azure Active Directory) for authentication, or to put Azure API Management in front as a shield that handles rate limiting, IP filtering and advanced security policies.

Also, never save connection strings in your code. Always use Azure Key Vault and reference secrets via app settings.
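On the code side, this usually means reading the secret from an application setting at runtime. App settings reach the function as environment variables, so a small helper that fails fast on a missing value is enough. The helper name and the setting name `ORDERS_DB_CONNECTION` used in the note are hypothetical.

```python
import os


def get_required_setting(name: str) -> str:
    """Read an application setting from the environment, failing fast if absent."""
    value = os.environ.get(name)
    if not value:
        raise RuntimeError(f"Missing application setting: {name}")
    return value
```

A handler would call `get_required_setting("ORDERS_DB_CONNECTION")` instead of embedding the connection string. When the setting is backed by a Key Vault reference, the platform resolves it, so the code sees only an environment variable and the secret never appears in the repository.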

And then there's the monitoring.

In a distributed system, you can't "debug" by looking at the console. The logs are spread across dozens of ephemeral instances.

Application Insights is mandatory, not optional.

It gives you a real-time dependency map: see which function called which database, how long it took, and where the error occurred.

Without Application Insights, you're blind. With it, you have X-rays on your system.

An Architect sets up proactive alerts: you want to know that exceptions have exceeded 1% before customers start calling support.
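The rule behind such an alert is simple enough to write down. The sketch below is plain Python, not an Application Insights query or alert definition; the `min_requests` guard is an added assumption so that a single failure in a nearly idle window does not page anyone.

```python
def exception_rate(total_requests: int, failed_requests: int) -> float:
    """Fraction of requests that ended in an exception (0.0 when idle)."""
    return failed_requests / total_requests if total_requests else 0.0


def should_alert(total_requests: int, failed_requests: int,
                 threshold: float = 0.01, min_requests: int = 100) -> bool:
    """Fire when the exception rate exceeds the threshold on enough traffic."""
    if total_requests < min_requests:
        return False  # assumption: too little traffic to judge
    return exception_rate(total_requests, failed_requests) > threshold
```

With the defaults, 11 failures out of 1,000 requests trigger the alert, while exactly 10 out of 1,000 do not, because the rule is "exceeds 1%", not "reaches 1%".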

When NOT to use Azure Func: architectural limitations and trade-off analysis

Intellectual honesty is the main skill of an Architect. Azure Func isn't for everything.

There are scenarios where using it is a costly mistake or simply the wrong technical choice.

- Long-running processes: In the Consumption plan, you have a hard limit of 10 minutes. If you need to process a video for 2 hours, use Azure Batch or Container Instances, not a Function.

- Constant and predictable load at high volume: If you have an API that consistently receives 10,000 requests per second 24/7, serverless could cost you much more than a well-sized Kubernetes (AKS) cluster or App Services. Serverless is billed "as you go", and if consumption is very high, the price goes up.

- Extreme control of the environment: If your application requires the installation of custom fonts, legacy GDI drivers, or particular operating system configurations, the PaaS/FaaS model will block you. In that case, Containers are the best choice.

- Constant ultra-low latency: For high-frequency trading or real-time gaming applications, HTTP overhead and potential cold starts make Functions unsuitable.

Trade-off analysis is what we do every day. Knowing when not to use a technology is as important as knowing how to use it.

The role of the Software Architect in hybrid cloud governance

The cloud doesn't manage itself. Azure Func is a powerful tool, but without an overview it leads to fragmentation.

The role of the Software Architect is no longer to draw UML diagrams on paper.

Today the Architect is the guarantor of governance.

The Architect must define policies to prevent costs from exploding, design networks (VNETs) so that sensitive data never travels over the public internet, and choose integration patterns that merge the old on-premises world with the new cloud world.

Azure Func often acts as a bridge in hybrid cloud scenarios, connecting legacy enterprise ERPs with modern SaaS services.

Technology is easy. People and processes are difficult.

The real challenge is not writing the function, but orchestrating the team, managing the DevOps life cycle, ensuring security and keeping the system evolvable over time.

Online tutorials teach you the “how to.” Competitors simply sell you theoretical certifications.

But no one teaches you to think and decide in complex and real scenarios where the budget is limited and the risks are high.


We transform senior developers into technical leaders capable of guiding the company in technological choices, using tools like Azure Func not for fashion, but to create real competitive advantage.

Don't just make code work. Learn to govern the system.

The next step for your career starts here.