You have earned compatibility, but not peace of mind: running the code is easy, making it really breathe is another story, and as long as something creaks, it will never be over.

Many think that updating the technology is sufficient to achieve efficiency, but without targeted intervention the code brought into the new environment remains inefficient.

Each non-optimized piece of code continues to use resources without producing value, with effects that accumulate over time and reduce the fluidity of the entire system.

Efficiency does not arise on its own: it must be built by carefully analyzing the points where memory, execution time or computational capacity are wasted.

Poor internal management manifests itself in small slowdowns that add up, turning the app into a fragile and struggling system when the load increases.

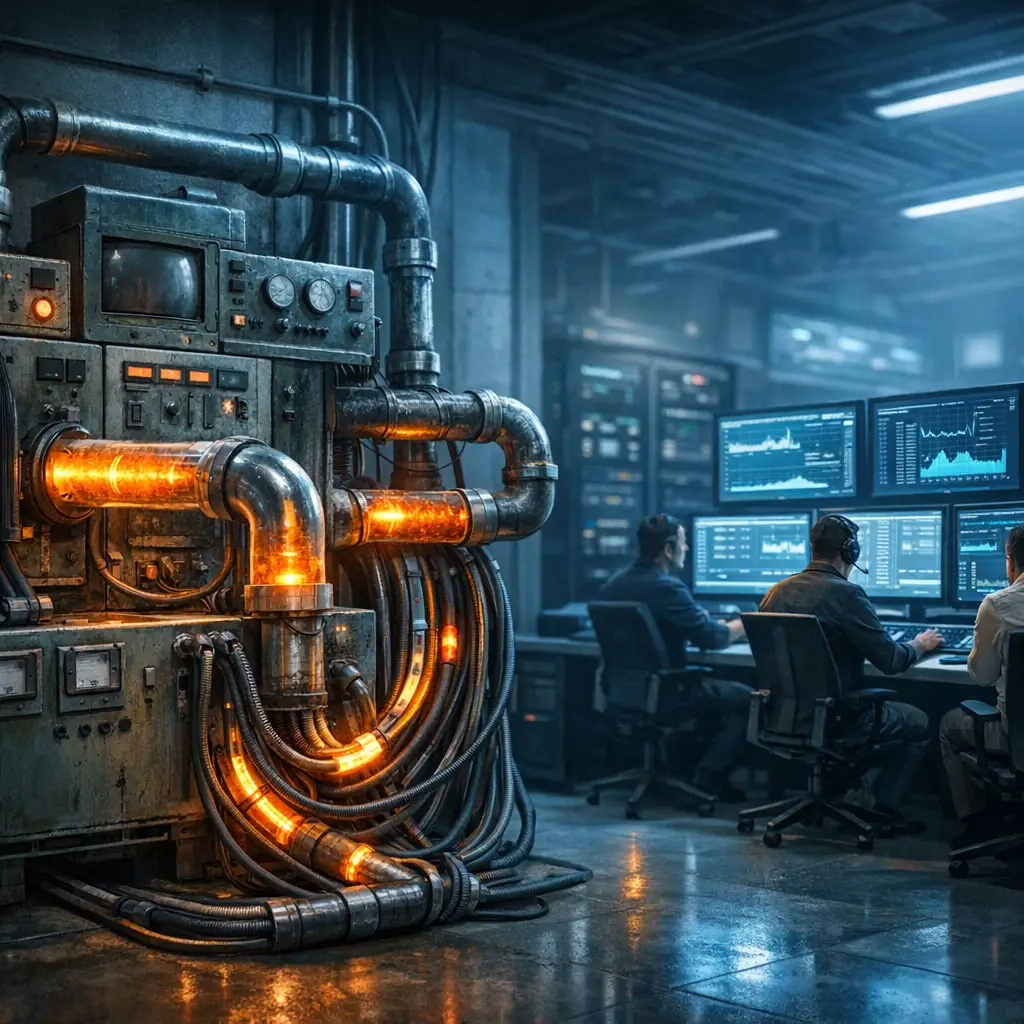

The first step is to observe how the application really behaves under load, measuring flows and discovering areas where resources are blocked for no reason.

Only after understanding how and where consumption accumulates is it possible to act precisely, reviewing what slows down the system without touching what works well.

The goal is to simplify without breaking, streamlining without losing functionality, intervening only where there is room to lighten the infrastructure.

A common mistake is to keep active objects or processes that are no longer needed, contributing to an uncontrolled growth in memory use.

Every forgotten component, every connection left open or event never removed is a technical debt that grows silently and makes the application less stable.

With the addition of new features, these inefficiencies are amplified, leading to bottlenecks that limit scalability and compromise quality.

While .NET offers automatic tools to manage memory and resources, it cannot replace the design discipline necessary to avoid the most insidious wastes.

Writing self-regulating code means not holding on to what is no longer needed, closing everything that is opened and monitoring every operation that affects performance.

Every technical choice must be accompanied by the awareness that even the smallest optimizations contribute to the general stability of the app over time.

True efficiency is not a question of immediate speed, but of sustainability: a code that does not degrade, that adapts, that does not collapse under pressure.

Every wasted resource is a small hidden failure, one of those that no one tells you but which, sooner or later, your user perceives.

A well-designed app can handle growth, absorb load and continue to offer consistent performance without having to be rewritten at each evolutionary step.

The difference lies in attention: those who optimize build lasting value, those who neglect details build problems that will explode at the worst moment.

Every internal behavior has a cost, every unmanaged process is a risk, every missed opportunity to improve is a loss that you pay for in the long run.

For this reason, every optimization intervention should arise from a clear question: is what has been written really the best that could be achieved today?

And if the answer is no, then it's the right time to intervene, methodically, with attention and with the will to build something that will last over time.

If after the migration you feel that something is still missing, the VB6 Migration course can help you close the loop with a mature approach to optimization.

Optimizing after migrating from VB6: the step that makes the difference

No application truly scales on a database that lives in the past: every wrong query is a brake that you yourself left engaged.

A compatible app is just the starting point; what distinguishes it is the ability to evolve without becoming burdened.

It's natural to want to close the project as soon as everything seems to be working, but that moment only marks the end of the conversion, not the beginning of the evolution.

Many stop at the first breath, convinced that breathing is enough to be alive.

But a migration that stops at compatibility is just well-disguised survival.

The migrated code often maintains logic created in another time, with slow structures, ineffective access to the database and rigid or unintuitive interfaces.

Moving an application without rethinking it is like moving house and taking all the old problems with you: nothing breaks, but nothing really improves.

Optimizing doesn't mean rewriting everything, but taking what has been adapted and making it work at its best in the new ecosystem.

The improvement not only affects performance but also readability, ease of maintenance and long-term sustainability of the code.

Reducing the use of resources, shortening response times and lightening the team's cognitive load are concrete and strategic benefits.

Many post-migration slowdowns arise from the fact that the code still behaves as if it were in a linear, synchronous, and undemanding context.

Habits such as uncontrolled loops, heavy functions or do-everything classes are common legacies that limit the potential of the new .NET environment.

The platform offers powerful tools, but they do not activate themselves: they must be known, understood and integrated into a new way of writing software.

The real leap is not technical but cultural: abandoning the VB6 approach and embracing a paradigm where each function has a clear task and a defined responsibility.

Investing in optimization is a choice of maturity, because it improves what already exists and prepares the application to last over time without degrading.

A migration can only be considered truly completed when the app is not only compatible, but also solid, fast and ready to welcome the future.

It is not enough that the code works: it must do so with coherence, efficiency and with that clarity that transforms simple functioning into real value.

The point is not what you saved, but how much better you are willing to make it than before.

Because if you don't do it, no one will.

Data is the soul of the app and if you treat it like a landfill, don't expect anyone to want to live in it.

If you suspect right now that your app isn't performing at its best, stop: every day you ignore inefficiencies is a day someone else writes better code than you.

And if you've made it this far and something inside you has moved, don't ignore it, because it's a sign that you're starting to look at code with new eyes.

And this, now, is your chance to get serious.

Those gray areas that slow everything down: learn to identify them and correct them

Over time, every application accumulates invisible shortcuts, automatisms nobody notices anymore and behaviors that, although they still work, now act as a brake on fluidity.

During migration, many of these logics are transferred without analysis, creating a gray area where technical inertia disguises itself as apparent stability.

The code brings with it invisible flaws that make no noise, but kill performance one click at a time.

The real bottlenecks are almost never obvious crashes, but progressive slowdowns caused by inefficient functions or routines left to run uncontrolled.

Often the problem is a function that is invoked too often, a module that loads more data than necessary, or a synchronous sequence that blocks the interaction.

In modern environments, what used to be tolerable is now a limit: the user expects instant responses, responsive interfaces and loads managed in real time.

Even a few seconds of waiting can influence the perception of quality, compromising the user experience and generating growing frustration.

To intervene, you need a method: sensing that something is slow is not enough, you need to have reliable tools that measure the real behavior of the system.

Profilers and tracing tools help to discover where the slowdowns are concentrated, how much memory is used and which functions consume more than expected.

The measurement is only the starting point because, after the diagnosis, it is necessary to understand whether the problem arises from the code, from the structure or from an outdated design choice.

It's not about pointing fingers, but about reading the context, analyzing the flows and building a response that isn't limited to the surface but gets into the cause.

A bottleneck solved returns strength to the application, reduces user fatigue and demonstrates attention to detail that distinguishes refined software.

Every millisecond recovered is not just a technical advantage, but a gesture of respect towards those who use the system every day and expect it to respond promptly.

The real problem is not the delay, but the habit of accepting it as inevitable: every optimization is a way to break this inertia.

Optimizing means reacting with clarity and method, choosing to improve what slows you down even if it works, because quality is never a coincidence.

Often it's not the bug, but the habit that slows you down.

The VB6 Migration course explores precisely those gray areas that slow things down without being noticed.

Do you know what the problem is?

The real problem is not the slowdowns, but the idea that they are normal: they are not and must no longer be.

From the rigidity of VB6 to the lightness of .NET: the rewriting that makes the difference

Code ported to .NET after a migration may work, but it often does so in a rigid manner, with inherited solutions that do not fully exploit the platform's current potential.

Optimizing does not mean making the code more beautiful, but more effective, through specific interventions that improve stability, clarity and overall performance.

The first step is to eliminate redundancies: repeated loops, superfluous checks and overly complex structures slow down execution and make everything harder to understand.

Here are the most common signs that logic is slowing down your system:

- nested loops that process more data than necessary

- duplicate checks which repeat validations already performed

- classes that play too many roles simultaneously

- methods that do more than they say

- repeated calls to non-optimized functions
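The first sign in the list, a nested loop that scans more data than it needs, can be illustrated with a minimal sketch. It is written in Python only to keep it short and runnable; the function names and the customer/order records are hypothetical. The same idea applies in .NET, where a `Dictionary` built once can replace a repeated inner scan.

```python
from collections import defaultdict


def match_orders_nested(customers, orders):
    """Quadratic version: every customer triggers a full scan of the orders."""
    result = {}
    for customer in customers:
        result[customer["id"]] = [
            order["total"]
            for order in orders
            if order["customer_id"] == customer["id"]
        ]
    return result


def match_orders_indexed(customers, orders):
    """Linear version: group the orders once, then look each customer up."""
    totals_by_customer = defaultdict(list)
    for order in orders:
        totals_by_customer[order["customer_id"]].append(order["total"])
    return {c["id"]: totals_by_customer.get(c["id"], []) for c in customers}
```

Both functions return the same mapping; the second one simply stops re-reading the whole order list for every customer, which is where the cost grows as the data grows.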

Optimization requires discipline, not intuition: it is a process made of conscious decisions that reduce risks and make the code easier to maintain over time.

A key element is precisely the separation of roles and responsibilities, because each monolithic block makes it difficult to test, evolve and troubleshoot the code.

In modern environments, writing code means building with lightweight, well-defined, easily replaceable modules that can work together without interference.

The technologies available today allow us to write asynchronous logic, exploit resources with greater precision and respond better to user requests.

Every architectural choice must aim to reduce the load, avoiding unnecessarily heavy constructions or generic solutions that are poorly suited to the specific context.

The use of the right tools is not enough if there is a lack of awareness of why they are being used: the technique must always be accompanied by an understanding of the problem.

Optimizing does not mean rewriting everything from scratch, but making the most of what works and correcting only what hinders the efficiency and sustainability of the system.

Anyone who intervenes without criteria risks worsening the situation, which is why speed must leave room for precision and reading of the real context.

Stable code is code that evolves without breaking, adapts without collapsing and lives on without depending on whoever wrote it.

Code that works is not synonymous with sustainable code.

The VB6 Migration course helps you recognize what weighs you down, even when it doesn't seem like it.

Returning to what has already been written with a new look allows us to identify alternatives that are more linear, cleaner and more consistent with current objectives.

The true strength of optimization lies in the ability to question what has always been done, choosing more essential solutions that are less prone to failure.

Caching in .NET: the edge for applications migrated from VB6

Caching is one of the most effective strategies for improving performance, but in migrated projects it is often ignored or underestimated, even when it would be crucial.

In legacy environments, computing everything on the spot was the norm, but in .NET, recalculating everything every time means wasting resources that could be saved.

Implementing a cache requires attention to detail: you must decide what to store, for how long and according to which rules the stored content is invalidated.

The advantage of the cache is evident in cases where the data rarely changes, because it allows you to avoid repeated readings from slow or expensive sources such as databases.

Whether for desktop, distributed or web applications, well-used temporary storage reduces response times and lightens the overall load on the system.

The guiding principle remains the same: do not perform an operation multiple times if the result can be reused without losing consistency or reliability.
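The three decisions above (what to store, for how long, and when to invalidate) can be sketched as a small time-based cache. This is an illustrative Python sketch, not a production component: the `TtlCache` class and its method names are hypothetical, and in a .NET project a ready-made class such as `MemoryCache` would normally play this role.

```python
import time


class TtlCache:
    """Keeps loaded values for a fixed number of seconds, then reloads them."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without waiting
        self._entries = {}   # key -> (expires_at, value)

    def get_or_load(self, key, loader):
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # still fresh: the expensive call is skipped
        value = loader(key)  # missing or expired: go back to the slow source
        self._entries[key] = (now + self._ttl, value)
        return value

    def invalidate(self, key):
        """Explicit rule: drop the entry as soon as the source data changes."""
        self._entries.pop(key, None)
```

A caller would wrap the slow read, for example `cache.get_or_load(customer_id, load_from_database)`, and call `invalidate` whenever that record is updated, so that speed never comes at the price of stale data.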

Every avoidable call is a gesture of respect towards those waiting.

And every useless wait is a broken promise, a signal that something could have been done better but it wasn't.

The cache can contain not only data, but also results of intensive calculations, static configurations, or responses to time-consuming queries.

For it to really work, though, it has to be monitored and managed with care, to prevent it from becoming a source of errors or inconsistencies that are difficult to detect.

An effective cache is invisible to the user but vital to the system: it speeds up, streamlines and protects, without ever hindering the correctness of the data presented.

When the cache is tailor-made, the system becomes smoother without increasing complexity, faster without becoming fragile or difficult to maintain.

Not everything needs to be saved and not everything needs to be recreated with every request: the balance lies in understanding what is worth storing and how long to keep it.

True optimization arises when the computational load can be reduced without compromising the timeliness of the information or the scalability of the app.

Caching, when used wisely, is a powerful tool for saving resources and improving user experience, without sacrificing control or accuracy.

Caching is not a gimmick; it's a way of saying: in here no one wastes time, nor code, nor life.

If you repeat the same calls every day as if time had no value, stop: every millisecond wasted today is one fewer user tomorrow, and you are not here to lose.

It's not just a matter of speeding up, but of saving energy.

The VB6 Migration course guides you to use the cache as a balancing lever, not a shortcut.

It's not just about writing better code, but about writing code that represents you, that says: "I put my head here. And my face too."

When you find a better way to achieve the same result, it takes courage to change, because every clearer choice is also a stronger choice.

From monolithic to modern: Using multithreading to bring VB6 code to life

The transition from VB6 to .NET requires a change of mentality in time management, because today fluidity is not a luxury, but a fundamental requirement for every application.

In VB6 the user waited, but in .NET time is a resource to be treated with respect, because every second of waiting is a missed opportunity to shine.

In the legacy approach, each operation blocked the next, freezing the interface and accustoming the user to waits that would be unacceptable today.

A modern migration must address this limitation and transform the code into a system capable of reacting without interruptions, even under increasing load.

.NET provides tools to distribute tasks in a non-blocking way, but knowing how to use them requires technical knowledge and clarity on responsibilities.

Writing async isn't enough if you don't understand that a new logic is being introduced, where time is managed with greater intelligence and control.

Letting the application continue to respond while it waits for a result is what separates a smooth experience from a slow and frustrating one.

If every operation remains synchronous even after the migration, you are missing out on one of the most important opportunities to improve overall efficiency.

Access to external resources such as databases, APIs or file systems should, as a rule, be handled asynchronously to avoid bottlenecks and unwanted blocking.

But using multithreading does not mean launching everything in parallel without criteria, because coordination is needed to keep the application stable.
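A minimal sketch of "asynchronous, but coordinated" might look like the following. It uses Python's `asyncio` purely for illustration; `fetch_record` is a hypothetical stand-in for a database or API call, and the two counters exist only to make the concurrency limit observable. In .NET the comparable building blocks would be `async`/`await`, `Task.WhenAll` and `SemaphoreSlim`.

```python
import asyncio

in_flight = 0        # calls currently waiting on simulated I/O
peak_in_flight = 0   # highest number of simultaneous calls observed


async def fetch_record(record_id):
    """Hypothetical stand-in for a database or API call."""
    global in_flight, peak_in_flight
    in_flight += 1
    peak_in_flight = max(peak_in_flight, in_flight)
    await asyncio.sleep(0.01)  # simulated I/O wait; the event loop stays free
    in_flight -= 1
    return {"id": record_id}


async def fetch_all(record_ids, max_concurrent=3):
    """Runs the calls concurrently, but never more than max_concurrent at once."""
    semaphore = asyncio.Semaphore(max_concurrent)

    async def bounded(record_id):
        async with semaphore:
            return await fetch_record(record_id)

    # gather returns the results in the same order as the inputs
    return await asyncio.gather(*(bounded(r) for r in record_ids))


results = asyncio.run(fetch_all(range(10)))
```

The semaphore is the coordination piece: the ten requests overlap instead of queuing one after another, yet the external resource is never hit by more than three of them at a time.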

Each background activity must be managed carefully to prevent it from becoming a source of excessive memory consumption or behavior that is difficult to track.

The real work is knowing how to distinguish which parts of the code can be executed in parallel and which instead require sequence and rigor.

Not all logic is suitable for concurrent execution, and forcing parallelization can generate more problems than it solves.

Optimizing execution time does not simply mean speeding up, but building a flow in which each operation occurs when needed and as needed.

When an application knows how to use time to its advantage, every interaction becomes more natural, every load faster and every wait more acceptable.

The difference can also be seen in the team: well-managed code at a temporal level reduces anxiety, simplifies debugging and makes the internal logic clearer.

A fluid app is born from code that knows how to wait where it needs to, run where it can and adapt to the context with the right amount of technical discipline.

Whoever masters time wins; whoever suffers it slows everyone down: today you can no longer afford to be the one slowing down.

Your application can no longer live on breath alone: it needs oxygen, and you are the one who has to give it.

If your app is still struggling to breathe, perhaps it's time to redistribute the load.

The VB6 Migration course tackles multithreading with the calm needed to avoid making mistakes.

Tools to monitor what happens after migrating from VB6 to .NET

You cannot improve what you do not measure precisely, and this is precisely the rule that makes monitoring the foundation of any optimization path.

Many times what appears slow is not, and vice versa: only data can confirm or deny a sensation, avoiding unnecessary interventions or wrong directions.

The most serious slowdowns are not always visible to the naked eye, and to identify them you need tools capable of analyzing what the code does not directly show.

Monitoring is not a one-off action, but a continuous cycle that alternates careful observation, accurate measurement and review based on concrete facts.

Performance analysis tools help you find out the real sources of inefficiency, revealing internal dynamics that would otherwise remain invisible.

Several tools are useful for this task. Each has its own characteristics, but they all allow you to identify critical areas, visualize the impact of slow calls and quantify real consumption.

With in-depth analysis you can see how long each function takes, how much memory each object consumes and where the heaviest waits accumulate.
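Before reaching for a full profiler, the idea of "how long each function takes" can be sketched with a simple timing wrapper. This is an illustrative Python sketch; the `timed` decorator, the `timings` table and `load_customer` are hypothetical names, and in .NET a timer such as `System.Diagnostics.Stopwatch` could serve the same purpose.

```python
import time
from collections import defaultdict
from functools import wraps

# function name -> number of calls and total elapsed seconds
timings = defaultdict(lambda: {"calls": 0, "seconds": 0.0})


def timed(func):
    """Records call count and cumulative duration for the wrapped function."""
    @wraps(func)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        try:
            return func(*args, **kwargs)
        finally:
            stats = timings[func.__name__]
            stats["calls"] += 1
            stats["seconds"] += time.perf_counter() - start
    return wrapper


@timed
def load_customer(customer_id):
    time.sleep(0.001)  # stands in for real work such as a query
    return {"id": customer_id}


for customer_id in range(5):
    load_customer(customer_id)

# List the functions that consumed the most time first.
for name, stats in sorted(timings.items(), key=lambda kv: kv[1]["seconds"], reverse=True):
    print(f"{name}: {stats['calls']} calls, {stats['seconds'] * 1000:.1f} ms total")
```

Even a crude table like this replaces the impression that something is slow with a number that can be compared before and after an intervention.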

The code stops being an opaque set of instructions and turns into a readable map, where every hot spot can be cooled down with targeted action.

Today it is no longer sufficient to measure only during development: the production environment must also provide metrics to anticipate problems and correct deviations.

There are tools capable of collecting real-time data, correlating events and recording errors and anomalous behavior even before the user reports them.

The most common mistake is to intervene where it "seems" that something is slowing down, neglecting those peripheral functions that instead cause the worst bottlenecks.

The real problems often lurk in the most neglected modules, in processes that activate in the background or in logic that no one has seen again for years.

Optimization requires rigor and patience: you have to learn to read numbers before deciding where and how to act so as not to rely on approximate intuitions.

Once the correct information has been collected, the most important work begins: that which transforms the data into concrete choices and measurable improvements.

Refactoring, strategic caching, managed asynchrony and load reduction are actions that must arise from an accurate diagnosis, not from assumptions.

Every reliable data point is a form of technical truth that must be listened to without arbitrary interpretations, because only in this way can something solid be built.

Every piece of data is a judgment on what you've written, and ignoring it means accepting that your application is slowly losing credibility.

Measuring means understanding, and understanding allows you to evolve without uncertainty, with the serenity of those who act on what is real and not on what is imagined.

Measuring is more than a technical gesture: it is a responsibility.

The VB6 Migration course teaches you to read the hidden signals that often precede real problems.

You already know if your app is slowing down, but until you measure it you're just hoping no one notices, and that's no longer acceptable.

Invisible Efficiency: Strategies to Reduce Your Application Load

Assuming that newer technology automatically fixes inefficient code is one of the most widespread mistakes after a migration from legacy environments.

Every piece of inherited and unrevised logic continues to negatively impact performance, increasing resource consumption even in the absence of visible problems.

The goal is not only to improve speed, but to guarantee stability, contain memory use and prevent progressive degradation that reduces the quality of the experience.

Excessive CPU, RAM, or I/O usage often doesn't result from bugs, but from outdated logic, unnecessarily active objects or loops that do not release what was allocated.

They are silent wastes, which do not block the application but undermine its efficiency day after day, slowing everything down without raising any alarms.

Reducing the load requires a concrete study of usage patterns: understanding what really happens during execution is the basis for any conscious improvement.

Identifying the heaviest functions, checking actual memory usage and monitoring the useful life of objects helps find margins for optimization.

In particular, pay attention to:

- objects that remain in memory longer than necessary

- processes that activate multiple times for no reason

- connections not closed correctly

- event handlers registered but never unregistered

- components loaded but not used
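The item about event handlers is worth a small illustration, because it is one of the easiest leaks to miss: as long as a publisher holds a reference to a handler, the object behind it stays alive. The sketch below is in Python and all class names are hypothetical; in .NET the equivalent discipline is pairing every `+=` on an event with a matching `-=`.

```python
class EventSource:
    """Minimal publisher that keeps a list of subscribed handlers."""

    def __init__(self):
        self._handlers = []

    def subscribe(self, handler):
        self._handlers.append(handler)

    def unsubscribe(self, handler):
        if handler in self._handlers:
            self._handlers.remove(handler)

    def publish(self, payload):
        for handler in list(self._handlers):
            handler(payload)

    @property
    def handler_count(self):
        return len(self._handlers)


class CustomerView:
    """Subscribes on creation and unsubscribes when it is closed."""

    def __init__(self, source):
        self._source = source
        self.received = []
        source.subscribe(self._on_changed)

    def _on_changed(self, payload):
        self.received.append(payload)

    def close(self):
        # Without this call the source would keep the view reachable forever.
        self._source.unsubscribe(self._on_changed)


source = EventSource()
view = CustomerView(source)
source.publish("customer 42 updated")
view.close()
source.publish("customer 43 updated")  # no longer delivered to the closed view
```

After `close()` the source holds no handlers, so the view can be collected and no work is done on its behalf.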

But collecting data is not enough: we need the ability to transform that information into targeted interventions, avoiding unnecessary or harmful changes.

The best optimizations come from thoughtful choices, not from drastic cuts, because what works well must be preserved and only what is burdensome must be reconsidered.

Among the most frequent errors is the retention in memory of objects that are no longer useful, connections left open or resources not correctly freed.

Each negligence accumulates, turning into a threat to overall stability, especially as the application grows in volume and complexity.

Although .NET has advanced tools to automatically manage resources, these do not replace the responsibility of the programmer.

The release of resources must be explicit, systematic and coherent, because relying only on automation still exposes you to risks of degradation.

Managing what opens well, closing what is activated and not keeping in memory what is not needed are fundamental actions for building efficiency.

Reducing consumption is not only a good practice, but a concrete gesture of respect for those who use the app and for those who will have to maintain it in the years to come.

A truly modern application is one that maintains consistent performance, holds up under load and continues to respond even in complex scenarios.

Behind every efficient behavior there is a conscious technical decision, the result of attention to detail and long-term design vision.

Those who plan with clarity know that performance is not only measured in time, but in the app's ability to remain solid as it evolves.

Even what you can't see weighs on you.

The VB6 Migration course shows you how to eliminate the waste that's holding your app back.

If you feel like your application is consuming more than it should, even if it “works,” don't ignore it.

It is precisely there that the worst waste is hidden: in the code that no one controls anymore, in the processes that remain active, in the details that drain resources every day.

Reducing consumption isn't just a technical detail, it's the way you tell your team, your users and even yourself: "I know where I'm going, and I don't want to drag around unnecessary ballast."

And to do that, you have to have the courage to go back.

To look better.

To choose to lighten up before it's too late.

When the bottleneck is in the data: how to give your database a boost

When you migrate an application from VB6 to .NET, the database is often the least touched part, but also the one that can most limit the real potential of the new platform.

Many systems inherit structures designed for light loads and based on outdated models that cannot keep pace with modern scalability and responsiveness needs.

Moving unoptimized tables to a new context is equivalent to mounting old components on a modern base, generating hidden but persistent slowdowns.

Optimizing means looking beyond the queries that return data and clearly addressing the deep structure on which the entire application is based.

Poorly designed tables, absent or excessive normalization, and poorly distributed data directly impact response times and operational fluidity.

The first check should concern indexes, which are often missing entirely, too generic or inconsistent with the most frequently used filters.

Evaluate carefully:

- the presence of indexes on the columns actually queried

- duplication of similar indexes that slow down updates

- the lack of indexes on the most used foreign keys

- indexes on columns with low cardinality (often useless)

- the effect of the indexes on frequent write operations
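Whether an index is actually used by a frequent filter can be checked rather than guessed. The sketch below uses Python with an in-memory SQLite database only because it is self-contained; the `orders` table and the index name are hypothetical, and a production database will have its own way of showing execution plans.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    [(i % 100, i * 1.5) for i in range(1000)],
)


def plan(sql, params=()):
    """Returns SQLite's description of how it intends to run the query."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return " | ".join(row[3] for row in rows)  # the fourth column is the detail text


query = "SELECT total FROM orders WHERE customer_id = ?"

before = plan(query, (42,))  # no index on customer_id yet: full table scan
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
after = plan(query, (42,))   # the plan now searches through the new index

print("before:", before)
print("after: ", after)
```

The same comparison also works in the other direction: an index that never appears in the plans of real queries only adds cost to every write.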

Query writing also deserves attention, because logic created in VB6 tends to generate dynamic SQL commands that are fragile and difficult to optimize.

The use of modern ORMs simplifies the code, but must be accompanied by knowledge of the real cost of materializing results too early.

Common mistakes like early calls to ToList() or filters placed too late lead to unnecessarily heavy queries that slow down the entire application.
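The difference between filtering too late and filtering in the query can be shown with a small, self-contained sketch. It is written in Python against an in-memory SQLite table, and the `invoices` schema is hypothetical; the first function mirrors what an early `ToList()` followed by an in-memory filter does in .NET code.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE invoices ("
    "id INTEGER PRIMARY KEY, customer TEXT, status TEXT, notes TEXT)"
)
conn.executemany(
    "INSERT INTO invoices (customer, status, notes) VALUES (?, ?, ?)",
    [
        (f"customer-{i}", "overdue" if i % 10 == 0 else "paid", "x" * 200)
        for i in range(500)
    ],
)


def overdue_ids_filtered_late():
    """Loads every row and every column, then filters in application memory."""
    rows = conn.execute(
        "SELECT id, customer, status, notes FROM invoices ORDER BY id"
    ).fetchall()
    return [row[0] for row in rows if row[2] == "overdue"]


def overdue_ids_filtered_early():
    """Lets the database filter and returns only the column that is needed."""
    rows = conn.execute(
        "SELECT id FROM invoices WHERE status = ? ORDER BY id", ("overdue",)
    )
    return [row[0] for row in rows]
```

Both functions return the same identifiers, but the first one transfers all 500 rows with their long `notes` column to obtain 50 values. The second version also passes the status as a parameter instead of concatenating it into the SQL text, which avoids the fragile dynamic commands typical of inherited code.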

In addition to queries, connection management must be considered: connections should be opened only when needed, closed correctly and reused through pooling mechanisms.

Transactions that are too long or poorly designed can generate locks, slowdowns and anomalies that are difficult to detect, especially in concurrent environments.

Monitoring the database with proper tools helps intercept problems before they become critical, acting on slow queries, blocking or inefficient schedules.

Tools like SQL Profiler or execution plans offer a concrete view of how the database responds to requests, helping to make corrections where they are really needed.

There is no need to wait for problems to emerge in production: the signs of inefficiency can already be seen in the logs, execution times and peak usage.

Optimizing the database is not an ancillary activity, but an act of care towards the entire system, because data quality affects every single operation.

The data is not a simple archive to query, but the living identity of the application: if managed poorly, everything else suffers, even when the code is perfect.

If the code is the engine, the data is the traction.

The VB6 Migration course helps you make them work together, without friction.

Only by treating the database as a strategic component and not as a simple container can you obtain a truly solid app ready to last over time.

How a function migrated from VB6 rediscovered its potential in .NET

Think of a function inherited from VB6 that loads a customer's details every time they are selected, even if the operation is repeated identically several times in a row.

In the old environment it worked without problems, but in .NET that same logic shows its limits, blocking the interface for a few moments with each new selection.

This is not an actual error, but inefficient behavior that compromises the fluidity of the user experience as the data increases.

The code continues to reopen the connection, execute the query, process and display the data even when it is not needed and nothing has changed compared to the previous selection.

No mechanism avoids recalculation, no local memory prevents the repetition of identical operations, and the interaction loses responsiveness with each click.

Optimizing does not mean rewriting the entire function, but rethinking it so that it better responds to real requests without wasting resources or time.

The first step is to measure how long it takes, which operations take the longest, and what is needlessly repeated in each execution of the same flow.

Then we introduce a local caching system to avoid duplicate queries when the selected customer has already been recently loaded.

Afterwards the database call is made asynchronous, to prevent it from blocking the interface while data is being retrieved and processed.

Finally, the query is simplified by extracting only the columns that are really necessary and by checking that formatting and processing do not introduce avoidable slowdowns.

The result is not only a smoother interface, but also cleaner, more readable and easier to maintain code, free of unnecessary duplication and overhead.

The user perceives a more responsive system, while the team gains confidence in a feature that now performs consistently with modern expectations.

The team feels it, the user experiences it, and you finally stop being afraid every time a demo starts.

There was no need to change tools, nor to alter the general architecture: just small targeted interventions born from observation and good design sense.

Optimization, ultimately, is this: not distorting the past, but making it worthy of the present, so that the software grows without losing its identity.

Each optimized function represents a concrete improvement for those who use the app and an act of technical care for those who maintain it and make it evolve over time.

When code responds fluidly, without imposing itself, without stopping, it returns that feeling of harmony that only a job well done can convey.

If you feel that your code "works" but no longer represents you, it's time to go back and rewrite it out of respect: for the team, for you, for everything it can still become.

Optimizing a function is just the beginning.

It's when you understand that you can do the same with the entire application that the change of mentality really comes about.

A migration does not end when the application works, but when it begins to evolve, becoming lighter, more fluid, more consistent with what it can really be today.

Optimizing a function is like regulating a breath: small gesture, big effect.

The VB6 Migration course leads you to do it methodically, at scale.

The code stops being a burdensome legacy only when it abandons the constraints of the past and is transformed into something that responds better, grows better, lives better.

Making an app efficient is not a trick for perfectionists, but a gesture of responsibility towards those who will use it, towards those who will maintain it and towards those who believed in it.

Every inefficient line that we leave behind is a failed promise, an unexpressed margin, a choice that someone else will have to pay for with time and effort.

Deciding to optimize means choosing not to look the other way, it means accepting that there is still value to be unlocked, still order to be returned.

Looking a migrated app in the face and choosing to improve it is an act of clarity and courage, because it means calling into question what seemed already done.

It means taking up the code again with more maturity, with more vision and, above all, with the sincere desire to make it become what it could really be.

Every optimized block, every simplified flow, every freed resource is a gesture that improves the experience of those who will use that app long after you.

If you are still reading, it is because you want your work to leave a mark, not just a correct execution but a structure that lasts, which convinces, which inspires trust.

You don't need a new tool, but a new intention, a clear direction that reminds you why you chose not to settle for something that merely runs.

You saved an app from its obsolescence, but now it's up to you to give it a new shape, a new rhythm, a new identity that is worthy of the context in which it lives.

Don't leave it at an intermediate stage made of compromises and postponements; take it to the end, without hesitation, because every choice made today builds tomorrow.

Do it with rigor, with awareness and with all the commitment that good code deserves, because this is what transforms a migrated project into a completed project.

Close the circle.

Stop migrating.

Start building.

And do it before it's too late.

Because what you just read is not theory.

It's the distance between an app that works and an app that makes a difference.