Generative AI for .NET teams that need real use cases, control, and economic value

This category explains how to bring LLMs, agents, and generative AI into .NET products and processes with technical discipline: less hype, more integration, more reliability, more measurable value.

LLMs are not chatbots: they are architectural components that change products

When a team discovers it can call GPT-4 from an ASP.NET controller in three lines of code, the first reaction is excitement.

The second, a few weeks later, is confusion: the model hallucinates, costs scale unpredictably, users do not understand what is happening, and the system is not testable.

The problem is not the model.

The problem is that an LLM is not a deterministic function: it is a probabilistic component with variable latency, costs proportional to the volume of text processed, and behavior dependent on the context provided.

Integrating it into a real product requires the same architectural decisions you make for any critical component: where is the boundary of responsibility, how do you handle failure, how do you monitor behavior in production.

In this category you find exactly that: not tutorials for calling an API, but reasoning on how to insert LLMs into real systems with Semantic Kernel, RAG, agents, and function calling, while maintaining control over costs, reliability, and output quality.

Semantic Kernel, agents, and pipelines: what to use and when

The .NET AI ecosystem has consolidated around Semantic Kernel as the primary orchestration framework.

It is not the only option, but it has the strongest Microsoft support, the best integration with Azure OpenAI, and the most active community in the .NET ecosystem.

When to use Semantic Kernel: when you need to compose multiple model calls, manage conversational memory, integrate plugins and tools, or build agents that reason over multiple steps. Semantic Kernel is overengineering for a single isolated call.

When to use the OpenAI SDK directly: when you want total control over the payload, have specific streaming or function calling requirements that Semantic Kernel does not expose cleanly, or are building a custom wrapper for your team.

When to build agents: when the problem requires the system to autonomously decide which tools to call, in what order, and based on what reasoning. Agents are powerful but fragile: they require rigorous prompt engineering, explicit fallbacks, and continuous monitoring.

When not to use an LLM: when the problem is deterministic, when latency is critical, when data cannot leave the infrastructure and a local model is not sufficient, or when the cost per query is not sustainable in the business model.

Costs, latency, and reliability: the three constraints that change everything

Anyone who builds a prototype with an LLM never has a cost problem.

Anyone who brings that prototype to production does.

Tokens cost money.

A RAG pipeline with retrieval, reranking, and generation can cost five to fifty times a simple call.

Multiplied by thousands of requests per day, that difference becomes a margin problem.

System design must account for it: shorter prompts, response caching, intelligent document chunking, choosing the right model for the task's complexity.

Latency is the second constraint.

A user interface that waits three seconds for an LLM response without visual feedback loses users.

Streaming the response solves the perception problem, but not the structural one: some pipelines simply cannot be made fast enough for certain use contexts.

Reliability is the third.

Models hallucinate.

Not always, not often, but enough to make an output validation system necessary when the result has an impact on critical decisions or data.

Automated evaluation, human feedback loops, and fallback to deterministic logic are not optional in production.

| Constraint | Mitigation strategy | .NET tool |

|---|---|---|

| Token cost | Prompt compression, caching, smaller model | Semantic Kernel, distributed cache |

| Latency | Streaming, parallelization, precompute | HttpClient streaming, Task.WhenAll |

| Reliability | Output validation, retry with different prompt | Semantic Kernel filters, Polly |

How to build an AI product that scales beyond the demo

The difference between a demo that impresses and a product that works in production is measured in months of work on aspects that no tutorial shows.

The first is observability: knowing what the user asked, what context was injected into the prompt, what the model responded, and how long it took.

Without this data you cannot improve the system or diagnose failures.

The second is testability: a system that calls an LLM is not testable with traditional unit tests, but it can be designed with replaceable interfaces, model mocks for functional tests, and automated output evaluation on reference datasets.

The third is governance: who can do what with the AI system, what data enters the context, how user consent is managed, what happens when the model produces inappropriate output.

These are not technical questions: they are product and compliance questions that the technical team must be able to raise before they become problems.

In this category the articles address exactly these aspects: not the magic of AI, but the engineering that makes it useful and sustainable.

Analyses, cases, and articles on LLMs, AI agents, and .NET integration patterns

34 articles foundTools, policies and governance to use artificial intelligence safely and productively

How to adopt AI in your development team in 2026: tools, policy, governance and ROI. Practical guide for CTOs and CEOs.

How to use vibe coding in corporate teams without losing control of code quality and security

Vibe Coding in the enterprise in 2026: policy, real risks and governance for CTOs. How to adopt it without compromising security and architecture.

AI Agents in .NET: why almost no one builds them right (and how to with Semantic Kernel)

Building AI agents in .NET is nothing like calling an API. Semantic Kernel, production patterns, security, and the mistakes to avoid.

The protocol that connects your .NET code to artificial intelligence

Build an MCP Server in .NET with the official Anthropic SDK. Practical guide with C# examples to connect your business data to AI in 2026.

MCP: the protocol changing how your tools talk to AI

What is the Model Context Protocol, why it is becoming the standard for agentic AI, and how to build an MCP server in C#/.NET with practical examples.

Copilot, Cursor or Claude Code: which AI coding tool is actually worth the money in 2026

Real comparison of GitHub Copilot, Cursor, and Claude Code in 2026. Which one to choose based on your tasks, budget, and daily work on .NET projects.

Vibe Coding: What Really Changes for Professional Software Developers

What is vibe coding, when it works, and when it fails. Practical guide with real examples for professional developers using AI in 2026.

Use hybrid search if you want to stop getting almost-right results

Signs you need hybrid search: unstable ranking, ignored tokens, imprecise results. Solutions across keyword search, embedding and re-ranking.

Software as an asset or a cost? How much money are you really losing every month?

Discover why treating software as an asset changes estimates and business risk. Practical criteria, indicators and choices to turn code into capital.

What is the best AI for programming without losing control

Discover the best AI for programming in 2026: when to use it in the IDE and when to use a chat. Real examples, free-tier limits, and method choices.

By introducing AI into SMEs, time, costs, errors and manual work in company software are reduced

AI in SMEs reduces hidden risks and costs, restoring consistency in business rules without blocking operations.

The problem of AI hallucinations, the invented answers and how to eliminate it

AI hallucinations generate false but plausible responses. With RAG and vector databases you can reduce them and make AI systems more reliable.

AI software development in the financial sector

How Artificial Intelligence is revolutionizing the financial sector, with concrete examples of real software to be developed in .NET

Visual Studio 2026 is the legal trick that makes you faster than everyone else

Explore what's new in Visual Studio 2026, with C# 14 and .NET 10 integration, to build modern and scalable applications.

How to use LLMs like Copilot in your .NET projects without becoming dependent

Learn how to integrate LLMs to boost productivity and code quality while keeping full architectural control over your .NET projects.

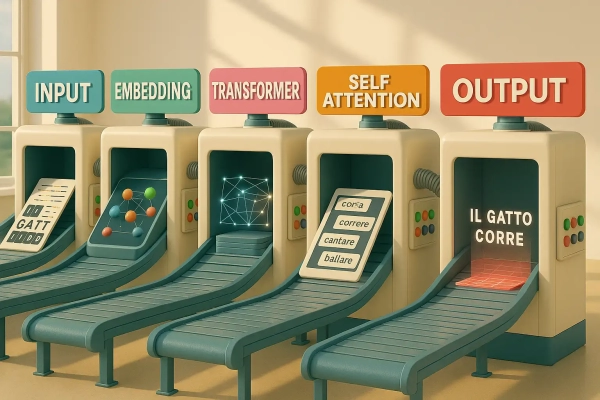

Language models explained simply: no magic, just numbers

How do language models work? They don't think, they compute. Discover the hidden tools that drive them and learn how to actually use them.

AI and machine learning for .NET developers: Don't settle for a black box that fires out random answers

For a .NET developer, AI and Machine Learning are essential: with ML you can track decisions, avoiding uncontrollable responses.

How programming with AI breaks down complexities: the proven method

Programming with AI gives you the right tools for every project. Strategies to eliminate hidden errors and master the code.

Learn about computer vision for image processing and how to teach a machine to really see

Learn to use computer vision and image processing with real-world examples, effective techniques, and real-world applications.

With XAI you can really understand what really happens inside the AI

XAI is key to interpreting your AI decisions and building trust in predictive models.

Where is artificial intelligence at and where will general AI arrive soon?

An in-depth analysis of modern AI and the path to AGI: what it can do today, how it is tested, and what awaits us in the coming years.

LLMs understand everything, except what you don't know to ask for

Behind every brilliant answer there is an LLM that you can learn to exploit. Choose not to suffer it, but use it with awareness

Using artificial intelligence in an advanced way: the mind leads, technology obeys

Find out how to use artificial intelligence in an advanced way. A mental and operational method for those who think, create and guide technology

The Future of Programming: The Skills All Developers Must Have (Or They'll Be Out of the Game)!

The future of programming is changing with artificial intelligence: which jobs are at risk and how to stay competitive in the new scenario

Will Artificial Intelligence replace programmers? The truth about the future of coding

Are you worried that Artificial Intelligence will replace programmers? Find out why your role is critical and how AI can become an ally.

How to become an artificial intelligence programmer from scratch to professional

Turn your passion into a tech career and discover how to become an artificial intelligence programmer.

Programming with AI: Deceptive Marketing or Your New Career?

Programming with artificial intelligence is useless. Discover with us why a programming course will guarantee you a better working future.

Mira Murati says goodbye to OpenAI: what her choice teaches future technology leaders like you

Find out why Mira Murati left OpenAI and how her experience can inspire you to become a successful software architect.

Artificial intelligence for programming: how to improve and accelerate your code

Learn how to use AI to write better code, automate repetitive tasks, and improve your development workflow.

Harness artificial intelligence and become an expert with the Microsoft AI course

Want to master .NET with AI? Discover the Microsoft AI Course and transform yourself into a sought-after professional in the industry.

The future of developers in the era of generative artificial intelligence in an in-depth analysis of the evolution of programming

Learn how generative AI is revolutionizing software development. Strategies to adapt and thrive in the age of AI

What LLMs are and how they will change the way we program and communicate forever

Find out what LLMs are and how they are revolutionizing programming and communication, forever transforming the way everyone works.

Develop with artificial intelligence to start programming in a simplified way!

Find out how developing AI can simplify your software development learning journey!

When LLMs become a real leverage point

LLMs become a real leverage point when they are connected to processes, data, and concrete use cases. Without integration they remain an impressive demo; with the right method they become assistants, semantic search engines, intelligent interfaces, and productivity multipliers for technical teams and companies.

Frequently asked questions

The most common integration is through Semantic Kernel, the Microsoft library that abstracts calls to OpenAI, Azure OpenAI, or local models. Alternatively, you can use the OpenAI SDK for .NET directly. The typical pattern involves a pipeline with memory, plugins, and model call orchestration, not a simple HTTP call.

Semantic Kernel is a Microsoft open source framework for orchestrating AI models in .NET, Python, and Java applications. Use it when you need to compose multiple model calls, manage conversational memory, integrate tools and plugins, or build autonomous agents. For single isolated calls, a direct SDK is simpler.

With .NET you can use GPT-4o and OpenAI models via the official SDK, Azure OpenAI models via Semantic Kernel, open source models like LLaMA or Mistral via Ollama locally, and any API compatible with the OpenAI standard. The choice depends on privacy requirements, latency, cost, and response quality in your specific domain.

Someone who knows AI understands where to place an LLM in the architecture without making it a bottleneck, how to manage token costs, when contextual generation is worth the latency trade-off, and how to fall back to deterministic logic when the model is unreliable. Those who do not tend to use AI as a decorative feature or build fragile dependencies.

Sources and references

Attention Is All You Need, Vaswani et al., 2017

The paper that introduced the Transformer architecture.

OpenAI developer resources

The official OpenAI documentation for GPT APIs, embeddings, and function calling. Essential for understanding the real limits of models, prompt structure, costs, and context management. I cite it because many articles on the subject skip exactly these technical details, which are the difference between a prototype and a system running in production.